This article was published as a part of the Data Science Blogathon.

Introduction

Deep learning is the subfield of machine learning which uses a set of neurons organized in layers. A deep learning model consists of three layers: the input layer, the output layer, and the hidden layers. Deep learning offers several advantages over popular machine learning algorithms like k nearest neighbour, support vector machine, linear regression, etc. Unlike machine learning algorithms, deep learning models can create new features from a limited set of information and perform advanced analysis. A deep learning model can learn far more complex features than machine learning algorithms. However, despite its advantages, it also brings several challenges. These challenges include the need for a large amount of data and specialized hardware like GPUs and TPUs.

In this article, we will be creating a deep learning regression model to predict home prices using the famous Boston home price prediction dataset. Not limited to that, we will also compare its results with some renowned machine learning algorithms in various terms.

Boston House Prediction Dataset

The dataset consists of 506 rows with 13 features and a target column, the price column. The dataset is readily available on the internet, and you can use this link to download it. Or it can also be loaded using Keras, as you will see in the below steps.

import pandas as pd import numpy as np # import tensorflow as tf from tensorflow import keras from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dropout, Flatten, Dense

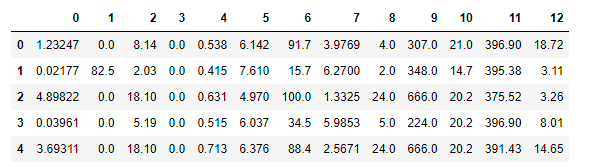

(train_features, train_labels), (test_features, test_labels) = keras.datasets.boston_housing.load_data() pd.DataFrame(train_features).head()

If we load the data from Keras, we will get data in a NumPy array. So, to better look at it, we will convert it to a pandas data frame and see the head of the data frame.

Deep Learning Regression Model

Now we have seen what the dataset looks like; we can start building the deep learning regression model. We will use the TensorFlow library to create the model. As the dataset is not very huge, we can limit the number of layers in the deep learning model and save time. We will use two fully connected layers with the relu activation. The relu activation outputs the input directly if it is greater than 0; otherwise returns zero.

model = keras.Sequential() model.add(Dense(20, activation='relu', input_shape=[len(train_features[0])])) model.add(Dense(1)) # model.compile(optimizer= 'adam', loss='mse', metrics=['mse']) history = model.fit(train_features, train_labels, epochs=20, verbose=0)

In the above code, we have created a feed-forward neural network. We trained the model for 20 epochs and used mean squared error as the loss function with adam optimizer. Now we can predict the results on the test data and compare it with actual values.

from sklearn.metrics import mean_squared_error as mse pred = model.predict(test_features) mse(pred, test_labels)

You can use other values sets like mean absolute error instead of mean squared error or RMS optimizer instead of adam optimizer. You can also tweak the learning rate and number of epochs to achieve better results.

Our deep learning regression model is completed, and as promised, we will compare its results with some popular machine learning algorithms.

K Nearest Neighbours

Firstly, we will train the famous K nearest neighbour regressor data. The algorithm is popular for classification problems but also gives fair results on regression tasks. It calculates the distance between examples present in the training and test sets. And then makes a prediction based on K examples, closest to the problem.

from sklearn.neighbors import KNeighborsRegressor as KNN model_k = KNN(n_neighbors=3) model_k.fit(train_features,train_labels)

pred_k = model_k.predict(test_features) mse(pred_k, test_labels)

Linear Regression

A supervised learning algorithm expects a linear relationship between the input and the output variable. It works on the formula Y = a +bX. Here, X is the explanatory variable, Y is the dependent variable, and b is the slope of the best fit line.

from sklearn.linear_model import LinearRegression model_l = LinearRegression() model_l.fit(train_features, train_labels)

pred_l = model_l.predict(test_features) mse(pred_l, test_labels)

Support Vector Machine

It is a supervised learning algorithm that finds a hyperplane in N-dimensional space and classifies the data points distinctly. A hyperplane is the best line to separate classes and segregate them into N-dimensional space. SVM can also be used with data that is not linearly separable.

from sklearn.svm import SVR model_s = SVR(C=1.0) model_s.fit(train_features, train_labels)

pred_s = model_s.predict(test_features) mse(pred_s, test_labels)

Sklearn also provides a regularization parameter C along with SVM. Regularization prevents the model from learning many complicated features that can result in overfitting the data.

Now comes the exciting part where we will compare every model we have created so far. We will compare the models based on root mean square error.

| Model | Algorithm | MSE(Mean Squared Error) | Time-taken to train(s) |

| model | Feed Forward Neural Network | 119.768 | 2.929 |

| model_k | KNN | 41.428 | 0.008 |

| model_l | Linear Regression | 23.195 | 0.063 |

| model_s | SVM | 66.345 | 0.305 |

From the table, we can derive several conclusions. With a more significant error, the deep learning model took more time to train than the machine learning algorithm. This might be due to the simplicity of the architecture or the lack of training data. The linear regression model gives the slightest mistake, which means a perfect linear relationship between the input and the target variable. I also trained SVM without the regularisation parameter, showing almost the same result. This means all the features in the dataset correlated with the target variable.

Conclusion

Deep learning offers several advantages over machine learning but can’t replace it with simple problems. This article created regression models using both deep learning and simple machine learning algorithms. We saw that training a deep learning model might not be the best choice every time from the results. For the dataset we chose, even simpler machine learning algorithms outperformed. Hence, we can conclude that deep learning should be used only when the simple machine learning algorithms fail to provide satisfactory results.

I hope you enjoyed reading this article!

For more articles – click here.

Please find me on LinkedIn.

The media shown in this article is not owned by Analytics Vidhya and are used at the Author’s discretion.