Exploratory Data Analysis (EDA) is the process of examining a dataset to understand it, extract insights, and identify its patterns and main characteristics. EDA methods are generally classified as either graphical or non-graphical.

EDA is essential because it is good practice to first understand the problem statement and the relationships between the data features before getting your hands dirty.

EDA helps uncover patterns, trends, and insights effectively. Keeping notes as you go helps summarize findings and keeps the exploration thorough and organized.

This article was published as a part of the Data Science Blogathon.

Exploratory Data Analysis (EDA)

The primary goals of EDA are to:

- Examine the data distribution

- Handle missing values (one of the most common issues in any dataset)

- Handle outliers

- Remove duplicate data

- Encode categorical variables

- Normalize and scale the data

Note: Don’t worry if you are not familiar with some of these terms. We will get to know them in detail.

Types of Exploratory Data Analysis

Univariate Analysis

Univariate analysis focuses on analyzing a single variable at a time. It aims to describe the data and find patterns rather than establish causation or relationships. Techniques used include:

- Descriptive statistics (mean, median, mode, standard deviation, etc.)

- Frequency distributions (histograms, bar graphs, etc.)
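As a minimal sketch of these univariate techniques, the snippet below computes descriptive statistics and a frequency distribution for a single column. The values are invented for illustration and are not taken from the happiness dataset used later in this article.

```python
import pandas as pd

# Hypothetical scores, invented for illustration only
scores = pd.Series([5.1, 6.3, 6.3, 7.0, 4.8, 6.3, 5.1], name="score")

# Descriptive statistics for the single variable
summary = {
    "mean": scores.mean(),
    "median": scores.median(),
    "mode": scores.mode().iloc[0],
    "std": scores.std(),  # sample standard deviation (ddof=1)
}
print(summary)

# Frequency distribution: how often each distinct value occurs
frequencies = scores.value_counts().sort_index()
print(frequencies)
```

For continuous data with many distinct values, a histogram groups the values into bins instead of counting each value separately.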

Bivariate Analysis

Bivariate analysis explores relationships between two variables. It helps find correlations, relationships, and dependencies between pairs of variables. Techniques include:

- Scatter plots

- Correlation analysis

Multivariate Analysis

Multivariate analysis extends bivariate analysis to include more than two variables. It focuses on understanding complex interactions and dependencies between multiple variables. Techniques include:

- Heat maps

- Scatter plot matrices

- Principal Component Analysis (PCA)
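To give a feel for PCA, here is a small sketch that performs it by hand with NumPy on synthetic data. The data, the seed, and the choice to keep two components are all assumptions made for illustration. In practice a library implementation would usually be used instead.

```python
import numpy as np

# Synthetic data: 100 samples, 3 features; feature 1 is built to track feature 0
rng = np.random.default_rng(0)
x0 = rng.normal(size=100)
X = np.column_stack([
    x0,
    2 * x0 + rng.normal(scale=0.1, size=100),
    rng.normal(size=100),
])

# Center each feature, then take the SVD of the centered matrix
X_centered = X - X.mean(axis=0)
U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)

# Share of the total variance captured by each principal component
explained_variance_ratio = S**2 / np.sum(S**2)
print(explained_variance_ratio)

# Project the data onto the first two principal components
X_reduced = X_centered @ Vt[:2].T
print(X_reduced.shape)
```

Because two of the three features are strongly related, most of the variance ends up in the first component, which is the kind of structure PCA is meant to reveal.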

Understanding EDA

To understand the steps involved in Exploratory Data Analysis, we will use Python as the programming language and work in Jupyter Notebooks. Jupyter is open-source, and it is not only an excellent development environment but also very good for visualization and presentation.

Step 1

First, we will import all the Python libraries that are required for this, which include NumPy for numerical calculations and scientific computing, Pandas for handling data, and Matplotlib and Seaborn for visualization.

Step 2

Then we will load the data into a Pandas DataFrame. For this analysis, we will use the “World Happiness Report” dataset, which has columns such as GDP per Capita, Family, Life Expectancy, Freedom, Generosity, and Trust (Government Corruption). These columns describe the extent to which each factor contributes to the happiness score.

You can find this dataset over here.

Step 3

We can observe the dataset by checking a few of the rows using the head() method, which returns the first five records from the dataset.

Step 4

Using shape, we can observe the dimensions of the data.

Step 5

The info() method shows characteristics of the data such as the column names, the number of non-null values in each column, the dtype of each column, and the memory usage.

Python Code:

# Import the libraries used throughout the analysis
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns

# Load the dataset into a DataFrame
happinessData = pd.read_csv('happiness.csv')

# Show the first five rows
print(happinessData.head())
print("-------------------------")

# Show the dimensions as (rows, columns)
print(f"Shape of the data: {happinessData.shape}")
print("-------------------------")

# info() prints its summary itself and returns None, so it is not wrapped in print()
happinessData.info()

From this output, we can see that our data doesn’t have any missing values. We are lucky in this case, but in real-life scenarios the data usually has missing values, which we need to handle for our model to work accurately. (Note: later on, I’ll show you how to handle data that has missing values.)

Step 6

We will use the describe() method, which shows basic statistical characteristics of each numerical feature (int64 and float64 types): the number of non-missing values, mean, standard deviation, minimum, maximum, and the 0.25, 0.50 (median), and 0.75 quartiles.
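The following sketch shows what describe() returns. It uses a tiny hand-made DataFrame with invented values rather than the real happiness.csv, so the numbers are illustrative only.

```python
import pandas as pd

# Hypothetical values, not taken from the real happiness dataset
df = pd.DataFrame({
    "Country": ["A", "B", "C", "D"],
    "Happiness Score": [7.5, 6.9, 5.2, 4.4],
    "Freedom": [0.66, 0.60, 0.45, 0.38],
})

# describe() summarizes numeric columns by default, so "Country" is skipped
stats = df.describe()
print(stats)

# The "50%" row holds the median of each column
print(stats.loc["50%", "Happiness Score"])
```

The range is not reported directly, but it can be read off as the difference between the max and min rows.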

Step 7

Handling missing values in the dataset. Luckily, this dataset doesn’t have any missing values, but real-world data is rarely this clean.

So I have intentionally removed a few values to show how to handle this case.

We can check whether our data contains null values by counting the nulls in each column.

As we can see, the “Happiness Score” and “Freedom” features have one missing value each.
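One common way to run such a check is isnull().sum(), sketched below. This is not necessarily the exact command used for the original output. The DataFrame is a hypothetical stand-in with one value removed from each of two columns.

```python
import numpy as np
import pandas as pd

# Hypothetical rows with one value removed from each of two columns
df = pd.DataFrame({
    "Happiness Score": [7.5, np.nan, 5.2, 4.4],
    "Freedom": [0.66, 0.60, np.nan, 0.38],
    "Family": [1.3, 1.2, 1.0, 0.9],
})

# isnull() marks each missing cell as True; sum() counts them per column
missing_per_column = df.isnull().sum()
print(missing_per_column)
```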

Missing values can be handled using a few techniques:

- Drop the missing values – If the dataset is large and only a few values are missing, we can drop them directly because doing so will not have much impact.

- Replace with mean values – We can replace the missing values with the mean, but this is not advisable if the data has outliers, because outliers pull the mean toward them.

- Replace with median values – We can replace the missing values with the median, which is recommended when the data has outliers because the median is robust to extreme values.

- Replace with mode values – We can do this for a categorical feature.

- Regression – A regression model can predict the missing value from the other features in the dataset.

In our case, we will handle the missing values by replacing them with the median value.

We can then check again whether the missing values have been handled.

We can see that our dataset no longer has any null values.
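Median imputation can be sketched as follows, again on a hypothetical DataFrame rather than the real dataset. The approach shown, fillna() with the per-column medians, is one reasonable way to do it and is an assumption about the implementation.

```python
import numpy as np
import pandas as pd

# Hypothetical data with one missing value per column
df = pd.DataFrame({
    "Happiness Score": [7.5, np.nan, 5.2, 4.4],
    "Freedom": [0.66, 0.60, np.nan, 0.38],
})

# median() skips NaN by default; fillna() matches the medians to columns by name
medians = df.median()
df_filled = df.fillna(medians)

# Re-check: every column should now report zero missing values
print(df_filled.isnull().sum())
```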

Step 8

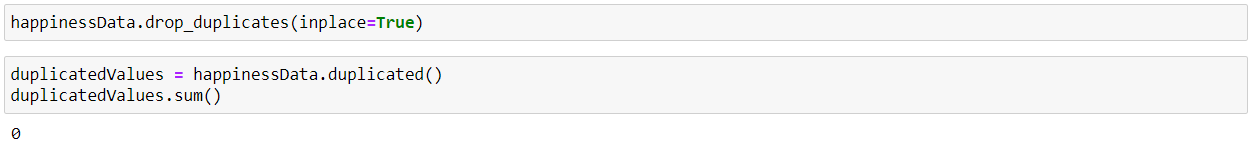

We can check for duplicate values in our dataset, as the presence of duplicate values will hamper the accuracy of our ML model.

We can remove duplicate values using drop_duplicates().

As we can see, the duplicate values are now handled.
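A minimal sketch of the duplicate check and removal, using a small invented DataFrame with one repeated row:

```python
import pandas as pd

# Hypothetical data in which the row for country "B" appears twice
df = pd.DataFrame({
    "Country": ["A", "B", "B", "C"],
    "Happiness Score": [7.5, 6.9, 6.9, 5.2],
})

# duplicated() flags rows that repeat an earlier row in every column
n_duplicates = df.duplicated().sum()
print(n_duplicates)

# drop_duplicates() keeps the first occurrence of each row by default
df_unique = df.drop_duplicates().reset_index(drop=True)
print(df_unique.duplicated().any())
```

To treat rows as duplicates based on only some columns, drop_duplicates() also accepts a subset argument.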

Step 9

Handling the outliers in the data, i.e. the extreme values in the data. We can find the outliers in our data using a boxplot.

As we can observe from the boxplot, the normal range of the data lies within the box, and the small circles at the extreme ends of the graph denote the outliers.
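A boxplot like this can be sketched with Matplotlib directly. The values below are invented, with one deliberately extreme entry. Seaborn's boxplot function would work just as well.

```python
import matplotlib.pyplot as plt
import pandas as pd

# Hypothetical values with one deliberately extreme entry (9.9)
values = pd.Series([0.35, 0.40, 0.42, 0.45, 0.48, 0.50, 0.55, 9.9], name="Freedom")

fig, ax = plt.subplots()
# By default the whiskers reach 1.5 * IQR past the box; points beyond are "fliers"
box = ax.boxplot(values.to_numpy())
ax.set_ylabel(values.name)

# The flier artist holds the points that are drawn as small circles
flier_values = box["fliers"][0].get_ydata()
print(flier_values)
```

In a Jupyter notebook the figure renders inline. In a plain script, call plt.show() or fig.savefig() to see it.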

To handle them, we can either drop the outlier values or replace them using the IQR (interquartile range) method.

The IQR is the difference between the 75th and 25th percentiles of the data. The percentiles can be calculated by sorting the values and selecting the values at specific indices. The IQR is used to identify outliers by defining limits on the sample values that lie a factor k of the IQR below the 25th percentile or above the 75th percentile. A common value for the factor k is 1.5.
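The IQR rule described above can be sketched like this. The data is hypothetical. Capping values at the limits with clip() is just one possible replacement rule, chosen here as an assumption.

```python
import pandas as pd

# Hypothetical values with one deliberately extreme entry (9.9)
values = pd.Series([0.35, 0.40, 0.42, 0.45, 0.48, 0.50, 0.55, 9.9], name="Freedom")

# Interquartile range: 75th percentile minus 25th percentile
q1 = values.quantile(0.25)
q3 = values.quantile(0.75)
iqr = q3 - q1

# Limits at k * IQR beyond the quartiles, with the common choice k = 1.5
k = 1.5
lower = q1 - k * iqr
upper = q3 + k * iqr

is_outlier = (values < lower) | (values > upper)
print(values[is_outlier])

# Option 1: drop the outliers
values_dropped = values[~is_outlier]

# Option 2: replace the outliers by capping them at the limits
values_capped = values.clip(lower=lower, upper=upper)
print(values_capped.max())
```

Dropping shrinks the dataset, while capping keeps every row but compresses the extremes. Which is appropriate depends on whether the outliers are errors or genuine observations.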

Now we can again plot the boxplot and check if the outliers have been handled or not.

Finally, we can observe that our data is now free from outliers.

Step 10

Normalizing and Scaling – Data normalization, or feature scaling, is a process that standardizes the range of the features of the data, as the ranges may vary a lot. We apply it as a preprocessing step before passing the data to ML algorithms. For this, we will use StandardScaler for the numerical values, which uses the formula (x - mean) / standard deviation.

As we can see, the “Happiness Score” column has been normalized.
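To make the formula concrete, the sketch below applies it by hand with pandas instead of calling StandardScaler. It uses the population standard deviation (ddof=0), which is what scikit-learn's StandardScaler uses. The data and the new column name are hypothetical.

```python
import pandas as pd

# Hypothetical values, not taken from the real happiness dataset
df = pd.DataFrame({"Happiness Score": [7.5, 6.9, 5.2, 4.4]})

col = df["Happiness Score"]

# z = (x - mean) / std, using the population standard deviation (ddof=0)
df["Happiness Score (scaled)"] = (col - col.mean()) / col.std(ddof=0)

print(df)
```

After scaling, the column has mean 0 and standard deviation 1, so features measured on very different scales become comparable.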

Step 11

We can find the pairwise correlation between the different columns of the data using the corr() method. (Note: correlation is only computed for numeric columns. Older pandas versions ignore non-numeric columns automatically; from pandas 2.0 onward, pass numeric_only=True to exclude them.)

Calling “happinessData.corr()” computes the pairwise correlation of the numeric columns in the data frame. The function automatically excludes NaN values.

The resulting coefficient is a value between -1 and 1 inclusive, where:

- 1: Total positive linear correlation

- 0: No linear correlation (the two variables may still be related in a non-linear way)

- -1: Total negative linear correlation

- -1: Total negative linear correlation

Pearson Correlation is the default method of the function “corr”.
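Here is a short sketch of corr() on an invented DataFrame. Selecting the numeric columns first with select_dtypes() is one way to get the same behaviour across pandas versions. The values are hypothetical, so the coefficients do not match the real dataset.

```python
import pandas as pd

# Hypothetical values, not taken from the real happiness dataset
df = pd.DataFrame({
    "Country": ["A", "B", "C", "D", "E"],
    "Happiness Score": [7.5, 6.9, 6.1, 5.2, 4.4],
    "Economy (GDP per Capita)": [1.40, 1.30, 1.05, 0.80, 0.55],
    "Freedom": [0.66, 0.45, 0.60, 0.38, 0.50],
})

# Keep only the numeric columns, then compute Pearson correlations (the default)
corr = df.select_dtypes("number").corr()
print(corr.round(2))
```

Other methods can be requested explicitly, for example corr(method="spearman") for rank correlation.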

Now, we will create a heatmap using Seaborn to visualize the correlation between the different columns of our data:

As we can observe from the above heatmap of correlations, there is a high correlation between:

- Happiness Score – Economy (GDP per Capita) = 0.78

- Happiness Score – Family = 0.74

- Happiness Score – Health (Life Expectancy) = 0.72

- Economy (GDP per Capita) – Health (Life Expectancy) = 0.82
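A correlation heatmap of this kind can be sketched with Seaborn as follows. The DataFrame holds invented values, so the coefficients will not reproduce the figures listed above. The colour map and value range are styling assumptions.

```python
import matplotlib.pyplot as plt
import pandas as pd
import seaborn as sns

# Hypothetical values, not taken from the real happiness dataset
df = pd.DataFrame({
    "Happiness Score": [7.5, 6.9, 6.1, 5.2, 4.4],
    "Economy (GDP per Capita)": [1.40, 1.30, 1.05, 0.80, 0.55],
    "Freedom": [0.66, 0.45, 0.60, 0.38, 0.50],
})

corr = df.corr()

fig, ax = plt.subplots(figsize=(6, 5))
# annot=True writes each coefficient into its cell; vmin/vmax fix the colour scale
sns.heatmap(corr, annot=True, fmt=".2f", cmap="coolwarm", vmin=-1, vmax=1, ax=ax)
ax.set_title("Correlation heatmap (hypothetical data)")
```

Fixing the colour scale to the range -1 to 1 keeps colours comparable between heatmaps of different datasets.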

Step 12

Now, using Seaborn, we will visualize the relation between Economy (GDP per Capita) and Happiness Score with a regression plot. As we can see, the Happiness Score increases as the Economy increases, which denotes a positive relation.

Next, we will visualize the relation between Family and Happiness Score with a regression plot.

Then we will visualize the relation between Health (Life Expectancy) and Happiness Score with a regression plot. As we can see, the Happiness Score rises with health: the better a person's health, the happier that person tends to be.

Finally, we will visualize the relation between Freedom and Happiness Score with a regression plot. Because the correlation between these two parameters is lower, the points are more scattered and the dependency between the two is weaker.
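A regression plot like the ones described above can be sketched with Seaborn's regplot(). The data is hypothetical. Swapping the x column name gives the other plots.

```python
import matplotlib.pyplot as plt
import pandas as pd
import seaborn as sns

# Hypothetical values, not taken from the real happiness dataset
df = pd.DataFrame({
    "Economy (GDP per Capita)": [1.40, 1.30, 1.05, 0.80, 0.55],
    "Happiness Score": [7.5, 6.9, 6.1, 5.2, 4.4],
})

fig, ax = plt.subplots()
# regplot() draws the scatter points plus a fitted linear regression line
sns.regplot(data=df, x="Economy (GDP per Capita)", y="Happiness Score", ax=ax)
```

An upward-sloping line with points close to it indicates a strong positive relation, while widely scattered points indicate a weak one.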

I hope we all now have a basic understanding of how to perform Exploratory Data Analysis (EDA).

These are the steps that I personally follow for Exploratory Data Analysis, but there are various other plots and commands that we can use to explore the data further.

Conclusion

Exploratory Data Analysis (EDA) involves examining datasets to discover patterns through univariate, bivariate, and multivariate analysis techniques. These methods concentrate on individual, paired, and multiple variables, respectively. EDA also involves dealing with missing values, which analysts typically address using methods such as mean or median imputation and predictive modeling to preserve data integrity.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.

A. The .corr() method in Exploratory Data Analysis (EDA) serves the purpose of calculating the pairwise correlation between all columns in a dataset. This method helps identify relationships and dependencies between variables, which is essential for understanding the data’s structure and underlying patterns. By examining the correlation coefficients, analysts can determine how strongly pairs of variables are related, aiding in feature selection and the development of predictive models.

A. The key aspects of Exploratory Data Analysis (EDA) include:

- Data Distribution Examination: Analyzing how data is distributed to identify patterns and trends.

- Handling Missing Values: Addressing gaps in the dataset using various techniques to maintain data integrity.

- Outlier Detection: Identifying outliers that may skew results or indicate significant anomalies.

- Data Cleaning: Removing duplicates and correcting inconsistencies to prepare the dataset for analysis.

- Encoding Categorical Variables: Converting categorical data into numerical formats for better analysis.

- Normalization and Scaling: Adjusting data scales to ensure equal contribution to analyses, especially in distance-based algorithms.

- Graphical and Non-Graphical Analysis: Utilizing both visual methods (like histograms) and statistical summaries to explore data comprehensively.

- Correlation Analysis: Understanding relationships between variables to identify potential predictors for modeling.