Data augmentation (DA) is a process that artificially increases the size of a training set by generating modified versions of real examples, without collecting new data. Tools such as TextAttack implement it for text. Each change must preserve the example's class label so that the augmented data still helps the classification task.

This article was published as a part of the Data Science Blogathon.

We use data augmentation strategies in Computer Vision and Natural Language Processing (NLP) to address data scarcity and insufficient data diversity. Creating augmented images is relatively easy, but the complexities inherent in language make augmentation more challenging in NLP. We cannot replace every word with its synonym, and even when a synonym exists, substituting it can change the meaning of the sentence in context.

Data augmentation increases the training data size, which can improve the model’s performance: in general, the more data we have, the better the model performs. The distribution of the augmented data should be neither too similar to nor too different from the original. Data that is too similar may lead to overfitting, and data that is too different may lead to poor performance, so effective DA approaches should aim for a balance.
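One rough way to check that balance is to measure the token overlap between each augmented sentence and its source, and to keep only candidates that fall inside a chosen band. The sketch below uses Jaccard similarity. The function names and the 0.5 and 0.95 thresholds are illustrative assumptions, not values taken from any library.

```python
def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity between two sentences."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


def filter_augmented(original, candidates, low=0.5, high=0.95):
    """Keep candidates that are neither near-duplicates of the original nor too far from it."""
    return [c for c in candidates if low <= jaccard(original, c) <= high]


original = "start each day with positive thoughts and make your day"
candidates = [
    "start each day with positive thoughts and make your day",    # identical
    "start each day with positive thoughts and induce your day",  # one word changed
    "the weather is cold",                                        # unrelated
]
kept = filter_augmented(original, candidates)
print(kept)
```

Here only the second candidate survives: the first is an exact copy and the third shares no tokens with the source.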

While data augmentation techniques are used in both Computer Vision and NLP, this tutorial focuses on NLP. We will demonstrate how the technique improves the performance of an NLP model.

Data augmentation techniques are applied at the following four levels:

- Character Level

- Word Level

- Phrase Level

- Document Level

Data Augmentation Techniques for Text Classification

Easy Data augmentation (EDA)

In this technique, the system randomly selects a word from the sentence and replaces it with a synonym or swaps two words in the sentence. EDA techniques include:

- Synonym replacement

Word Embedding based Replacement: We can use pre-trained word embeddings like GloVe, Word2Vec, and fastText to find the nearest word vector in the embedding space and replace words in the original sentence.

Contextual bidirectional embeddings such as ELMo and BERT can be used for more reliable replacements, because their vector representations are much richer: Bi-LSTM and Transformer-based models encode longer text sequences and are aware of the words surrounding each token.

Lexical based Replacement: WordNet is a lexical database for English that records word meanings, hyponyms, and other semantic relations. We can use WordNet to find synonyms for a given token/word in the original sentence and replace it. Python NLP packages such as NLTK and spaCy can also be used to find and replace synonyms in the original sentence.

- Random Insertion: Pick a random word in the sentence that is not a stopword, find a synonym for it, and insert that synonym at a random position in the sentence.

- Random Deletion: Randomly removing words within the sentence.

- Random Swapping: Randomly choose two words within the sentence and swap their positions.
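The four EDA operations listed above can be sketched in plain Python as follows. This is a minimal illustration and not the implementation used by any library: the `SYNONYMS` and `STOPWORDS` tables are toy stand-ins (a real pipeline would query WordNet or an embedding model), and all function names are hypothetical.

```python
import random

# Toy lookup tables (assumptions for illustration only).
SYNONYMS = {"positive": ["upbeat", "optimistic"], "make": ["form", "create"], "thoughts": ["ideas"]}
STOPWORDS = {"each", "with", "and", "your"}


def synonym_replacement(words, rng):
    """Replace one randomly chosen word that has a known synonym."""
    candidates = [i for i, w in enumerate(words) if w in SYNONYMS]
    if not candidates:
        return words[:]
    i = rng.choice(candidates)
    out = words[:]
    out[i] = rng.choice(SYNONYMS[words[i]])
    return out


def random_insertion(words, rng):
    """Insert a synonym of a random non-stopword at a random position."""
    candidates = [w for w in words if w in SYNONYMS and w not in STOPWORDS]
    if not candidates:
        return words[:]
    synonym = rng.choice(SYNONYMS[rng.choice(candidates)])
    out = words[:]
    out.insert(rng.randint(0, len(out)), synonym)
    return out


def random_deletion(words, rng, p=0.1):
    """Drop each word with probability p, keeping at least one word."""
    kept = [w for w in words if rng.random() > p]
    return kept if kept else [rng.choice(words)]


def random_swap(words, rng):
    """Swap the positions of two randomly chosen words."""
    if len(words) < 2:
        return words[:]
    i, j = rng.sample(range(len(words)), 2)
    out = words[:]
    out[i], out[j] = out[j], out[i]
    return out


rng = random.Random(0)
words = "start each day with positive thoughts and make your day".split()
for op in (synonym_replacement, random_insertion, random_deletion, random_swap):
    print(op.__name__, "->", " ".join(op(words, rng)))
```

Passing an explicit `random.Random` instance keeps the augmentations reproducible, which helps when comparing runs.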

Backtranslation

A sentence is translated into another language, and the result is then translated back into the original language. The round trip usually yields a sentence with different wording but the same meaning.
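A minimal sketch of the round trip is shown below. The two word-level lookup tables are toy stand-ins for a real machine translation system, and `back_translate` and `toy_translate` are hypothetical names. The paraphrase arises because several source words map to the same pivot word, which then maps back to only one of them.

```python
from typing import Callable


def back_translate(text: str,
                   to_pivot: Callable[[str], str],
                   from_pivot: Callable[[str], str]) -> str:
    """Translate text into a pivot language and back again."""
    return from_pivot(to_pivot(text))


# Toy word-level "translators" (assumptions, not a real MT model).
EN_TO_FR = {"big": "grand", "large": "grand", "house": "maison", "the": "la"}
FR_TO_EN = {"grand": "large", "maison": "house", "la": "the"}


def toy_translate(table):
    """Build a translator that maps each word through the given table."""
    return lambda s: " ".join(table.get(w, w) for w in s.split())


paraphrase = back_translate("the big house", toy_translate(EN_TO_FR), toy_translate(FR_TO_EN))
print(paraphrase)  # the large house
```

In practice the two callables would wrap a translation model or service in place of the dictionaries.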

Generative Models

We can train a generative adversarial network (GAN) to generate text from a few words, or use pre-trained language models such as BERT, RoBERTa, BART, and T5 to generate text in a manner that better preserves class categories. The model is conditioned on the class category together with its associated text sequences and generates new samples with some modifications. This approach is usually more reliable, and the generated samples are more representative of the associated class category.

Libraries for Data Augmentation

A few libraries available for data augmentation are listed below:

- TextAttack

- nlpaug

- googletrans

- NLP Albumentations

- TextAugment

Below we demonstrate data augmentation with the TextAttack library.

TextAttack Library Introduction and Data Augmentation Sample

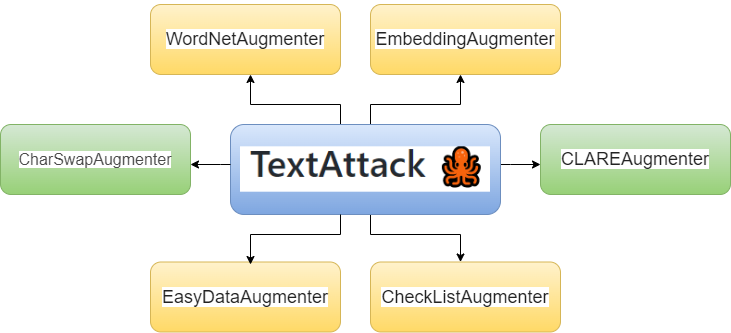

source: Author

TextAttack is a Python framework. It is used for adversarial attacks, adversarial training, and data augmentation in NLP. In this article, we will focus only on text data augmentation.

The textattack.augmentation module in TextAttack provides ready-made augmenter classes. This article covers six of them:

- WordNetAugmenter

- EmbeddingAugmenter

- CharSwapAugmenter

- EasyDataAugmenter

- CheckListAugmenter

- CLAREAugmenter

Let’s look at the data augmentation examples using these six methods.

TextAttack installation

!pip install textattack

WordNetAugmenter

WordNetAugmenter augments text by replacing words with synonyms provided by WordNet.

from textattack.augmentation import WordNetAugmenter

text = "start each day with positive thoughts and make your day"

wordnet_aug = WordNetAugmenter()

wordnet_aug.augment(text)

Output : ['start each day with positive thoughts and induce your day']

EmbeddingAugmenter

EmbeddingAugmenter augments text by replacing words with their neighbors in the counter-fitted embedding space, with a constraint that ensures their cosine similarity is at least 0.8.

from textattack.augmentation import EmbeddingAugmenter

embed_aug = EmbeddingAugmenter()

embed_aug.augment(text)

Output : ['start each day with positive idea and make your day']
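The idea of a nearest-neighbor lookup with a cosine-similarity floor can be illustrated without any model download. The sketch below is not TextAttack's implementation: the three-dimensional vectors are made up for the example (real embeddings have hundreds of dimensions), and `nearest_neighbour` is a hypothetical helper.

```python
import math

# Toy vectors (assumptions for illustration only).
EMBEDDINGS = {
    "thoughts": [0.9, 0.1, 0.0],
    "idea":     [0.8, 0.2, 0.1],
    "day":      [0.0, 0.9, 0.3],
    "banana":   [0.1, 0.0, 0.9],
}


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def nearest_neighbour(word, min_similarity=0.8):
    """Return the most similar other word, or None if none reaches the threshold."""
    best_word, best_sim = None, min_similarity
    for other, vec in EMBEDDINGS.items():
        if other == word:
            continue
        sim = cosine(EMBEDDINGS[word], vec)
        if sim >= best_sim:
            best_word, best_sim = other, sim
    return best_word


print(nearest_neighbour("thoughts"))  # idea
print(nearest_neighbour("banana"))    # None
```

The threshold is what stops a word from being swapped for an unrelated one when it has no close neighbor, as with "banana" here.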

EasyDataAugmenter

EasyDataAugmenter (EDA) augments text with a combination of word insertions, substitutions, deletions, and swaps.

from textattack.augmentation import EasyDataAugmenter

eda_aug = EasyDataAugmenter()

eda_aug.augment(text)

Output :

['start each day with positive thoughts make your day',
 'start each day with positive thoughts and form your day',
 'start each day with positive thoughts and make your daytime day',
 'start make day with positive thoughts and each your day']

CharSwapAugmenter

CharSwapAugmenter augments text by substituting, deleting, inserting, and swapping adjacent characters.

from textattack.augmentation import CharSwapAugmenter

charswap_aug = CharSwapAugmenter()

charswap_aug.augment(text)

Output : ['start each day with positive thoughts and amke your day']
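The four character-level edits can be sketched with the standard library alone. This is an illustration of the idea, not TextAttack's code, and every function name below is hypothetical.

```python
import random
import string


def swap_adjacent(word, rng):
    """Swap two neighbouring characters, e.g. 'make' can become 'amke'."""
    if len(word) < 2:
        return word
    i = rng.randrange(len(word) - 1)
    chars = list(word)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def delete_char(word, rng):
    """Remove one character, leaving words of length 1 untouched."""
    if len(word) < 2:
        return word
    i = rng.randrange(len(word))
    return word[:i] + word[i + 1:]


def insert_char(word, rng):
    """Insert one random lowercase letter at a random position."""
    i = rng.randint(0, len(word))
    return word[:i] + rng.choice(string.ascii_lowercase) + word[i:]


def substitute_char(word, rng):
    """Replace one character with a random lowercase letter."""
    if not word:
        return word
    i = rng.randrange(len(word))
    return word[:i] + rng.choice(string.ascii_lowercase) + word[i + 1:]


def perturb_sentence(text, rng):
    """Apply one random character-level edit to one random word."""
    words = text.split()
    i = rng.randrange(len(words))
    op = rng.choice([swap_adjacent, delete_char, insert_char, substitute_char])
    words[i] = op(words[i], rng)
    return " ".join(words)


rng = random.Random(42)
print(perturb_sentence("start each day with positive thoughts and make your day", rng))
```

Edits of this kind mimic typos, so they are mostly useful for making a model robust to noisy input.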

CheckListAugmenter

It augments text by contraction/extension and by substituting names, locations and numbers.

from textattack.augmentation import CheckListAugmenter

checklist_aug = CheckListAugmenter()

checklist_aug.augment(text)

Output : ['start each day with positive thoughts and make your day']
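The sample sentence contains no contractions, names, locations, or numbers, which would explain why the output above is unchanged. The sketch below illustrates only the contraction/extension part with a tiny hand-written table. It is an assumption-laden illustration, not the CheckList implementation, and a real contraction list would be much longer.

```python
import re

# A few contraction pairs for illustration only.
CONTRACTIONS = {"do not": "don't", "is not": "isn't", "i am": "i'm", "it is": "it's"}
EXPANSIONS = {short: full for full, short in CONTRACTIONS.items()}


def contract(text):
    """Replace full forms with their contractions."""
    for full, short in CONTRACTIONS.items():
        text = re.sub(r"\b" + re.escape(full) + r"\b", short, text)
    return text


def expand(text):
    """Replace contractions with their full forms."""
    for short, full in EXPANSIONS.items():
        text = re.sub(r"\b" + re.escape(short) + r"\b", full, text)
    return text


print(contract("i am sure it is not late"))  # i'm sure it isn't late
print(expand("don't worry"))                 # do not worry
print(contract("start each day with positive thoughts and make your day"))  # unchanged
```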

CLAREAugmenter

It augments text by replacing, inserting, and merging with a pre-trained masked language model.

from textattack.augmentation import CLAREAugmenter

clare_aug = CLAREAugmenter()

print(clare_aug.augment(text))

Output : ['start each day with positive thoughts and make your finest day']

Below we compare model performance with and without data augmentation.

#import required libraries

import pandas as pd

import random

import re

import string

import nltk

from nltk.stem.wordnet import WordNetLemmatizer

nltk.download('punkt')

nltk.download('wordnet')

nltk.download('stopwords')  # needed by stopwords.words('english') in clean_text

from sklearn.feature_extraction.text import CountVectorizer

from nltk.corpus import stopwords

from sklearn.naive_bayes import MultinomialNB

from sklearn.model_selection import RepeatedStratifiedKFold

from sklearn.model_selection import cross_val_score

#Read the dataset

data_fileTr = "nlp-getting-started/train.csv"

train_data = pd.read_csv(data_fileTr)

train_data.shape

Output : (7613, 5)

The clean_text function shown below preprocesses the text data.

def clean_text(text):
    # lowercase the text
    text = text.lower()
    # remove stop words (build the set once per call instead of once per word)
    stop_words = set(stopwords.words('english'))
    text = ' '.join(word for word in text.split(' ') if word not in stop_words)
    # remove text in square brackets
    text = re.sub(r'\[.*?\]', '', text)
    # remove HTML tags
    text = re.sub(r'<.*?>+', '', text)
    # remove hyperlinks
    text = re.sub(r'https?://\S+|www\.\S+', '', text)
    # remove punctuation
    text = re.sub('[%s]' % re.escape(string.punctuation), '', text)
    # remove newlines
    text = re.sub(r'\n', '', text)
    # remove words containing numbers
    text = re.sub(r'\w*\d\w*', '', text)
    # tokenize
    tokens = nltk.word_tokenize(text)
    # lemmatize
    wn = WordNetLemmatizer()
    tokens = [wn.lemmatize(word) for word in tokens]
    return " ".join(tokens)

train_data["text"] = train_data["text"].apply(clean_text)

Most machine learning algorithms take numeric feature vectors as input. Thus, when working with text data, we need to convert each document into a numeric vector, here using CountVectorizer.

count_vectorizer = CountVectorizer()

train_vectors_counts = count_vectorizer.fit_transform(train_data["text"])

train_vectors_counts.shape

Output : (7613, 16520)
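To make the shape above concrete, here is a small bag-of-words sketch in plain Python: each row is a document and each column is a vocabulary term. It only mirrors the idea behind CountVectorizer. The two sample documents and the `to_count_vector` helper are made up for illustration.

```python
from collections import Counter

docs = ["forest fire near la ronge", "fire fire everywhere"]

# Vocabulary: every distinct token, in sorted order, mapped to a column index.
vocabulary = {tok: i for i, tok in enumerate(sorted({t for d in docs for t in d.split()}))}


def to_count_vector(doc):
    """Count how often each vocabulary term occurs in the document."""
    counts = Counter(doc.split())
    return [counts.get(tok, 0) for tok in vocabulary]


matrix = [to_count_vector(d) for d in docs]
print(vocabulary)
print(matrix)
```

The result has 2 rows and 6 columns, just as the real matrix has 7613 rows and 16520 columns. CountVectorizer additionally lowercases text, ignores single-character tokens by default, and returns a sparse matrix.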

# Fitting a simple Multinomial Naive Bayes model

mnb = MultinomialNB()

cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=1)

print("Mean Accuracy: {:.2}".format(cross_val_score(mnb, train_vectors_counts, train_data["target"], cv=cv).mean()))

Output : Mean Accuracy: 0.79

The function below takes the texts, their labels, and a TextAttack augmenter as input and returns a list of original and augmented texts with their corresponding labels. About one in three samples is selected at random for augmentation.

def textattack_data_augment(data, target, textAttack_augmenter):
    aug_data = []
    aug_label = []
    for text, label in zip(data, target):
        # always keep the original sample
        aug_data.append(text)
        aug_label.append(label)
        # randint(0, 2) returns 0, 1, or 2, so about one sample in three is augmented
        if random.randint(0, 2) != 1:
            continue
        aug_list = textAttack_augmenter.augment(text)
        aug_data.extend(aug_list)
        aug_label.extend([label] * len(aug_list))
    return aug_data, aug_label

Here, for data augmentation, we configure an EmbeddingAugmenter and pass it to the function listed above.

embed_aug = EmbeddingAugmenter(pct_words_to_swap=0.1, transformations_per_example=1)

aug_data, aug_label = textattack_data_augment(train_data["text"], train_data["target"], embed_aug)

Next, we clean the augmented text with the clean_text function and vectorize it with CountVectorizer.

clean_aug_data = [clean_text(txt) for txt in aug_data]

count_vect = CountVectorizer()

aug_data_counts = count_vect.fit_transform(clean_aug_data)

aug_data_counts.shape

Output : (10254, 17912)

print("Mean Accuracy: {:.2}".format(cross_val_score(mnb, aug_data_counts, aug_label, cv=cv).mean()))

Output : Mean Accuracy: 0.83
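The size of the augmented set follows from the sampling rule in textattack_data_augment: random.randint(0, 2) returns 0, 1, or 2 with equal probability, so roughly one sample in three is sent to the augmenter, and with transformations_per_example=1 each of those contributes one extra row. The simulation below uses a stand-in augmenter (hypothetical, not TextAttack) to estimate the expected size.

```python
import random


def fake_augmenter(text):
    """Stand-in for augmenter.augment: returns one modified copy."""
    return [text + " (augmented)"]


def augmented_size(n_samples, seed=0):
    """Simulate how many rows the sampling rule produces for n_samples inputs."""
    rng = random.Random(seed)
    size = 0
    for _ in range(n_samples):
        size += 1                      # the original sample is always kept
        if rng.randint(0, 2) == 1:     # about one sample in three is augmented
            size += len(fake_augmenter("text"))
    return size


n = 7613
print(augmented_size(n), "rows; expected about", round(n * 4 / 3))
```

For 7613 inputs the expectation is about 10151 rows, which is of the same order as the 10254 rows reported above. The exact count varies from run to run because the selection is random.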

Accuracy Comparison with & without DA and DA Benefits

Mean accuracy improved from 0.79 without augmentation to 0.83 with it.
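When comparing the two scores, it may be worth checking that augmented copies do not leak across folds: if cross-validation runs on the already-augmented set, a variant of a sentence can sit in a training fold while its original sits in the validation fold. A stricter protocol augments only the training portion of each fold. The sketch below shows that split logic with the standard library. `kfold_with_train_only_augmentation` and `toy_augment` are hypothetical names, and the split is not stratified, unlike the RepeatedStratifiedKFold used above.

```python
import random


def kfold_with_train_only_augmentation(texts, labels, augment, k=5, seed=1):
    """Yield (train_texts, train_labels, val_texts, val_labels) for each fold.

    Only training samples are augmented; validation folds hold original text.
    """
    indices = list(range(len(texts)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for held_out in folds:
        val_set = set(held_out)
        train_texts, train_labels = [], []
        for i in indices:
            if i in val_set:
                continue
            variants = [texts[i]] + augment(texts[i])
            train_texts.extend(variants)
            train_labels.extend([labels[i]] * len(variants))
        yield (train_texts, train_labels,
               [texts[i] for i in held_out], [labels[i] for i in held_out])


def toy_augment(text):
    """Stand-in for augmenter.augment(text)."""
    return [text.upper()]


texts = [f"sample {i}" for i in range(10)]
labels = [i % 2 for i in range(10)]
for tr_x, tr_y, va_x, va_y in kfold_with_train_only_augmentation(texts, labels, toy_augment):
    # No validation sentence, nor any variant of it, appears in training.
    assert not {v.lower() for v in va_x} & {t.lower() for t in tr_x}
    print(len(tr_x), "training rows,", len(va_x), "validation rows")
```

Each fold's model would then be fitted on the training rows and scored on the untouched validation rows, which gives an estimate closer to performance on unseen data.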

The benefits of data augmentation are as follows:

- Reduces the cost of collecting and labeling data

- Improves model prediction accuracy by:

  - increasing the training data size for the models

  - preventing data scarcity

  - reducing overfitting and creating variability in the data

  - increasing the generalization ability of the models

  - resolving the data imbalance problem in classification tasks

Conclusion

In this article, I gave an overview of how various data augmentation techniques work and demonstrated how to use them to increase training data size and improve ML model performance. We covered six TextAttack augmenters along with examples.

The media shown in this article is not owned by Analytics Vidhya and are used at the Author’s discretion.