This article was published as a part of the Data Science Blogathon.

Introduction on 3D-CNN

The MNIST dataset classification is considered the hello world program in the domain of computer vision. The MNIST dataset helps beginners to understand the concept and the implementation of Convolutional Neural Networks. Many think of images as just a normal matrix but in reality, this is not the case. Images possess what is known as spatial information. Consider a 3X3 matrix shown below.

In a regular matrix, the values in the matrix will be independent of each other. The neighboring values will not carry any relation or information for a specific field in the matrix. For example, the value present in place of “e” in the matrix will have no connection to the values present in other positions like “a”, “b”, etc. This is not the case in an image.

In an image, each position in the matrix represents a pixel in the image and the value present in each position represents the value of the pixel. The pixel value can be from [0-255] in an 8-bit image. Each pixel has some relation to its neighboring pixels. The neighborhood of any pixel is the set of pixels that are present around it. For any pixel, there are 3 ways to represent its neighborhood known as N-4, N-D, and N-8. Let’s understand them in detail.

- N-4: It represents the pixels that are present in the top, bottom, right, and left of the reference pixel. For pixel “e”, the N-4 contains “b”, “f”, “h”, and “d”.

- N-D: It represents the pixels that are present diagonally accessible from the reference pixel. For pixel “e”, the N-D contains “a”, “c”, “i”, and ‘g’.

- N-8: It represents all the pixels that are present around it. It includes both N-4 and N-D pixels. For pixel “e”, the N-8 contains “a”, “b”, “c”, “d”, “f”, “g”, “h”, and “i”.

The N-4, N-8 and N-D pixels help in extracting information about a pixel. For example, these parameters can be used to classify a pixel as either a border or an interior or an exterior pixel. This is the specialty of images. An ANN accepts input as a 1D array. An image is always present in a 2D array, with 1 or more channels. When an image array is transformed into a 1D array, it losses spatial information and so, an ANN fails to capture this information and performs poorly on an image dataset. This is where a CNN excels. A CNN accepts a 2D array as input and performs a convolution operation using a mask (or a filter or a kernel) and extracts these features. A process known as pooling is performed which reduces the number of features extracted and reduces the computational complexity. After performing these operations, we convert the extracted features into a 1D array and feed it to the ANN part that learns to perform classification.

The article aims to extend the concept of convolution operation on 3D data. We will be building a 3D CNN which will perform the classification on the 3D MNIST dataset. You can download the dataset from here.

Overview of the Dataset

We will be using the fulldatasetvectors.h5 file in the dataset. This file has 4096-D vectors obtained from the voxelization(x:16, y:16, z:16) of all the 3D point clouds. This file contains 10000 training and 2000 test samples. The dataset has point cloud data as well which can be used. A detailed explanation of the dataset is available here. Please feel free to read more about the dataset before proceeding.

Importing Modules

Importing Modules

As the data is stored in h5 format, we will be using the h5py module for loading the dataset from the data from the fulldatasetvectors file. TensorFlow and Keras will be used for building and training the 3D-CNN. The to_categorical function helps in performing one-hot encoding of the target variable. We will also be using earlystopping callback to stop the training and prevent overfitting of the model.

import numpy as np import h5py from tensorflow.keras.utils import to_categorical from tensorflow.keras import layers from tensorflow.keras.models import Sequential from tensorflow.keras.initializers import Constant from tensorflow.keras.optimizers import Adam from tensorflow.keras.callbacks import EarlyStopping

Loading the Dataset

As mentioned earlier, we will be loading data from the fulldatasetvectors.h5 file using the h5py module.

We can see that the train data has 10000 samples while the test data has 2000 samples and each sample comprises 4096 features.

train shape: (10000, 4096) test shape: (2000, 4096)

Building the 3D-CNN

The 3D-CNN, just like any normal CNN, has 2 parts – the feature extractor and the ANN classifier and performs in the same manner. The 3D-CNN, unlike the normal CNN, performs 3D convolution instead of 2D convolution. We will be using the sequential API from Keras for building the 3D CNN. The first 2 layers will be the 3D convolutional layers with 32 filters and ReLU as the activation function followed by a max-pooling layer for dimensionality reduction. There is also a bias term added to these layers with a value of 0.01. By default, the bias value is set to 0. The same set of layers is again used but with 64 filters. This is then followed by a dropout layer and a flatten layer. The flatten layer helps in reshaping the features into a 1D array which can be processed by an ANN, i.e., dense layers. The ANN part consists of 2 layers, with 256 and 128 neurons respectively, and ReLU as the activation function. This is then followed by an output layer with 10 neurons as there are 10 different classes or labels present in the dataset.

model = Sequential() model.add(layers.Conv3D(32,(3,3,3),activation='relu',input_shape=(16,16,16,1),bias_initializer=Constant(0.01))) model.add(layers.Conv3D(32,(3,3,3),activation='relu',bias_initializer=Constant(0.01))) model.add(layers.MaxPooling3D((2,2,2))) model.add(layers.Conv3D(64,(3,3,3),activation='relu')) model.add(layers.Conv3D(64,(2,2,2),activation='relu')) model.add(layers.MaxPooling3D((2,2,2))) model.add(layers.Dropout(0.6)) model.add(layers.Flatten()) model.add(layers.Dense(256,'relu')) model.add(layers.Dropout(0.7)) model.add(layers.Dense(128,'relu')) model.add(layers.Dropout(0.5)) model.add(layers.Dense(10,'softmax')) model.summary()

This is the architecture of the 3D-CNN.

Model: "sequential_2" _________________________________________________________________ Layer (type) Output Shape Param # ================================================================= conv3d_5 (Conv3D) (None, 14, 14, 14, 32) 896 _________________________________________________________________ conv3d_6 (Conv3D) (None, 12, 12, 12, 32) 27680 _________________________________________________________________ max_pooling3d_2 (MaxPooling3 (None, 6, 6, 6, 32) 0 _________________________________________________________________ conv3d_7 (Conv3D) (None, 4, 4, 4, 64) 55360 _________________________________________________________________ conv3d_8 (Conv3D) (None, 3, 3, 3, 64) 32832 _________________________________________________________________ max_pooling3d_3 (MaxPooling3 (None, 1, 1, 1, 64) 0 _________________________________________________________________ dropout_4 (Dropout) (None, 1, 1, 1, 64) 0 _________________________________________________________________ flatten_1 (Flatten) (None, 64) 0 _________________________________________________________________ dense_3 (Dense) (None, 256) 16640 _________________________________________________________________ dropout_5 (Dropout) (None, 256) 0 _________________________________________________________________ dense_4 (Dense) (None, 128) 32896 _________________________________________________________________ dropout_6 (Dropout) (None, 128) 0 _________________________________________________________________ dense_5 (Dense) (None, 10) 1290 ================================================================= Total params: 167,594 Trainable params: 167,594 Non-trainable params: 0

Training the 3D-CNN

We will be using Adam as the optimizer. Categorical cross-entropy will be used as the loss function for training the model as it is a multiclass classification. The accuracy will be used as the loss metric for training. As mentioned earlier, the Earlystopping callback will be used when training the model along with the dropout layers. The Earlystopping callback helps in stopping the training process once any parameter like loss or accuracy does not improve over a certain number of epochs, which in turn helps in preventing the overfitting of the model. Dropouts help prevent the overfitting of the model by randomly turning off some neurons when training and making the model learn and not memorize. The dropout value should not be too high as it might lead to the underfitting of the model, which is not ideal.

model.compile(Adam(0.001),'categorical_crossentropy',['accuracy']) model.fit(xtrain,ytrain,epochs=200,batch_size=32,verbose=1,validation_data=(xtest,ytest),callbacks=[EarlyStopping(patience=15)])

These are some epochs of training the 3D-CNN.

Epoch 1/200 313/313 [==============================] - 39s 123ms/step - loss: 2.2782 - accuracy: 0.1237 - val_loss: 2.1293 - val_accuracy: 0.2235 Epoch 2/200 313/313 [==============================] - 39s 124ms/step - loss: 2.0718 - accuracy: 0.2480 - val_loss: 1.8067 - val_accuracy: 0.3395 Epoch 3/200 313/313 [==============================] - 39s 125ms/step - loss: 1.8384 - accuracy: 0.3382 - val_loss: 1.5670 - val_accuracy: 0.4260 ... ... Epoch 87/200 313/313 [==============================] - 39s 123ms/step - loss: 0.7541 - accuracy: 0.7327 - val_loss: 0.9970 - val_accuracy: 0.7061

Testing the 3D-CNN

The 3D-CNN achieves a decent accuracy of 73.3% on the train and 70.6% on the test data. The accuracy might be slightly on the lower side as the dataset is quite small and not balanced.

_, acc = model.evaluate(xtrain, ytrain)

print('training accuracy:', str(round(acc*100, 2))+'%')

_, acc = model.evaluate(xtest, ytest)

print('testing accuracy:', str(round(acc*100, 2))+'%')

313/313 [==============================] - 11s 34ms/step - loss: 0.7541 - accuracy: 0.7327 training accuracy: 73.27% 63/63 [==============================] - 2s 34ms/step - loss: 0.9970 - accuracy: 0.7060 testing accuracy: 70.61%

Conclusion

To sum it up, this article covered the following topics:

- Neighbors of a pixel in an image

- Why an ANN performs poorly on an image dataset

- Differences between a CNN and an ANN

- Working of CNN

- Building and training a 3D-CNN in TensorFlow

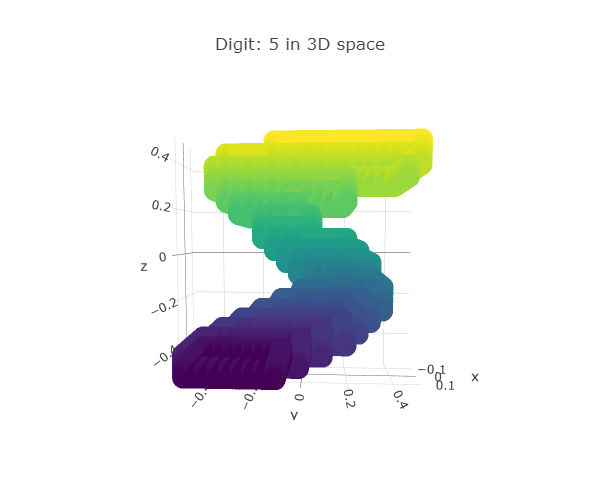

To continue this project further, a new customized 3D dataset can be created from the MNIST dataset by projecting the pixel values on another axis. The x-axis and y-axis will remain the same as in any image but the pixel values will be projected on the z-axis. This transformation of creating 3D data from 2D data can be applied after performing image augmentation so that we have a well-balanced and generalized dataset that can be used to train the 3D-CNN and achieve better accuracy. That’s the end of this article. Hope you enjoyed reading this article.

Thanks for reading and happy learning!

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.