This article was published as a part of the Data Science Blogathon.

Introduction

Amazon Athena is an interactive query service based on open-source Apache Presto that allows you to analyze data stored in Amazon S3 using ANSI SQL directly. In addition, it is serverless, so there is no infrastructure to manage and maintain, and you only pay for the queries you run.

.png)

Differentiating Athena from Database and Warehouse

Athena works more like a query engine than a particular database. This means that:

- Compute and storage are decoupled: Databases store data at rest and provide the resources needed to perform queries and calculations. Each of these comes with direct and indirect overhead costs. It does not store data – instead, storage is managed entirely on Amazon S3. The Athena query service is fully managed, so resources are automatically allocated by AWS as needed to execute a query.

- No DML interface: No need to model data with Athena. I/O is the bottleneck of virtually every database, but it’s not a problem with Athena. And since you don’t have to waste I/O bandwidth on data modelling, you can focus all computing resources on query processing.

Advantages of Using Athena

Serverless Design Reduces IT Overhead: Amazon Athena is serverless, meaning there is no user-side infrastructure to manage or configure. Using Athena is as simple as defining a query, and you only pay for the questions you run. As a result, there are no additional IT costs and no clusters to manage.

- Based on SQL: You can use Athena to run SQL queries against the desired table that is configured in the Glue data catalogue or data sources that you can connect to using the Athena Query Federation SDK. For users who already know SQL, there is no learning curve to get started.

- Open architecture (no vendor lock-in): Athena enables open access to data rather than lock-in to a specific tool or technology. This manifests itself in various ways;

- Ubiquitous Access: Because your data is stored in an S3 bucket and the schema is defined in the Glue Data Catalog, you can switch between query engines that can read from these sources without redefining the schema or creating a separate copy of the data.

- Separated storage and computing resources: Athena has a complete separation of computing and memory resources. Data is stored in your Amazon S3 account, while Amazon Web Services provide Athena computation as a shared resource among all Athena users.

- Open file formats: Unlike many high-performance databases, Athena does not use a proprietary file format but supports standard open source formats such as Apache Parquet, ORC, CSV, and JSON.

- Low cost: Athena’s pricing model is based on terabytes of scanned data. You can control and keep costs down by checking only the data you need to answer a specific query (this can be done using data splitting – see below).

- Access to all your data: Most organizations process only 30 to 35 percent of their data into a traditional data warehouse due to the high operational and infrastructure costs of constantly resizing database clusters. Because this storage costs a fraction of what you would pay to keep the same data in a data warehouse, you can handle larger volumes of data without worry.

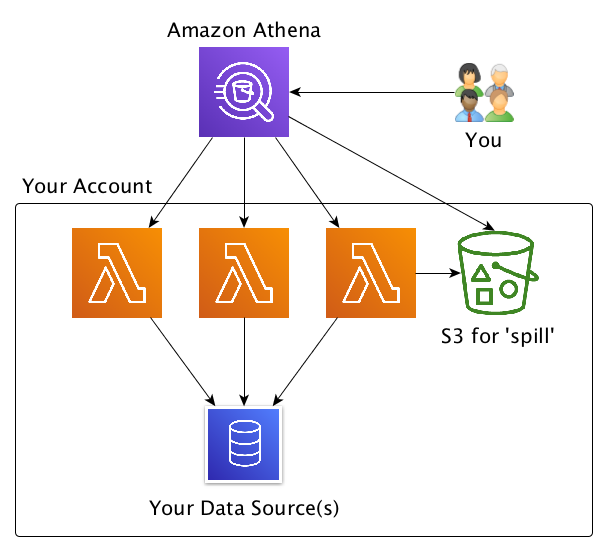

- Custom Connectors: Amazon Athena lets you run SQL queries across multiple data sources, which can drive various business intelligence and analytics processes. You can use JDBC to connect Athena with BI and machine learning tools.

Image source – https://www.sqlshack.com/an-introduction-to-aws-athena/

Limitations of Athena

- The optimization is limited to queries: You can optimize your questions, not your data. However, your data is already stored in Amazon S3; performing transformations to use Athena may affect other users using the exact information for other purposes.

- Multi-tenancy means pooled resources: All Athena users receive a similar SLA for queries at any time. In other words, the entire global user base is “competing” for the same resources – and although AWS provides more as needed, this could mean that query performance fluctuates depending on other people’s usage.

- No indexing: Indexes are integrated into traditional databases but do not exist in Athena. This makes joining large tables a demanding operation that increases the load on Athena and negatively impacts performance. For example, running a query by key requires scanning all the data and searching for the desired key in the result list. This is solved using Upsolver lookup tables.

- Partitioning: Efficient queries in Athena require partitioning of the data. Maintaining the number of partitions in the park that meet your performance needs is essential. Every 500 divisions scanned will add 1 second to your query.

Other Products Required with Athena

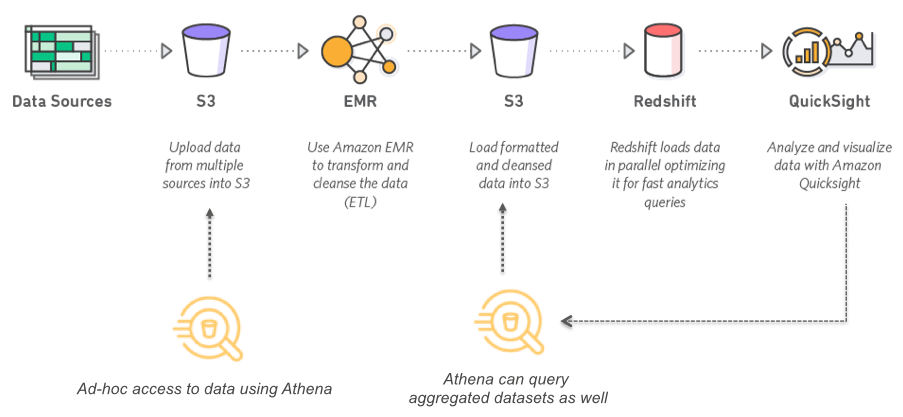

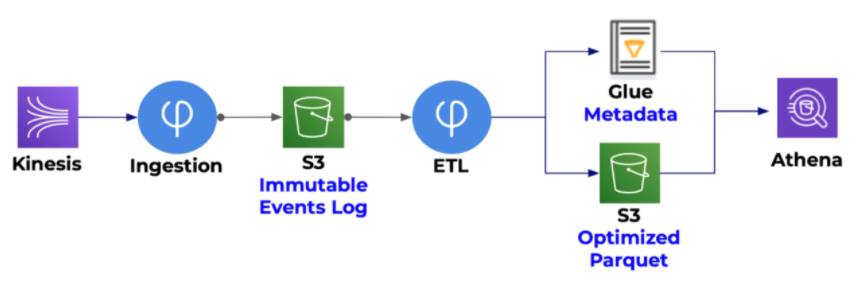

- Amazon S3: Athena queries run directly on Amazon S3, so this is where your data will be stored.

- Glue Data Catalog: A centralized managed schema that allows you to replace or augment Athena with other services as needed (for example, with Amazon Redshift Spectrum).

- ETL Tools: While Athena can run almost any query out of the box, reducing costs and improving performance requires following a set of performance tuning best practices. The traditional way is to use Spark, which can process large volumes of unstructured data; however, this option requires considerable coding knowledge. Some solutions offer managed Spark as a service that simplifies the infrastructure aspects but doesn’t remove the coding overhead.

Use Case

Conclusion

Athena is a service offered by Amazon that is an interactive query service. Athena makes it easy for the user to directly analyze data in Amazon S3 (Simple Storage Service) using standard SQL. For example, in the Amazon Management Console, it can be set to point to where data is stored in Amazon S3 with a few clicks of a button. SQL can then be used to run ad-hoc queries, bringing the result to the user in seconds.

- It does not store data. Instead, storage is managed entirely on Amazon S3. The Athena query service is fully managed, so resources are automatically allocated by AWS as needed to execute a query.

- Because your data is stored in an S3 bucket and the schema is defined in the Glue Data Catalog, you can switch between query engines that can read from these sources without redefining the schema or creating a separate copy of the data.

- Indexes are integrated into traditional databases but do not exist in Athena. This makes joining large tables a demanding operation that increases its load and negatively impacts performance. For example, running a query by key requires scanning all the data and searching for the desired key in the result list. This is solved using Upsolver lookup tables.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.