Introduction

I’ve always wondered how big companies like Google process their information, or how companies like Netflix can return search results in such a short time. That’s why I want to tell you about my experience with two powerful tools they use: Apache Hive and Elasticsearch.


What are Apache Hive and Elasticsearch?

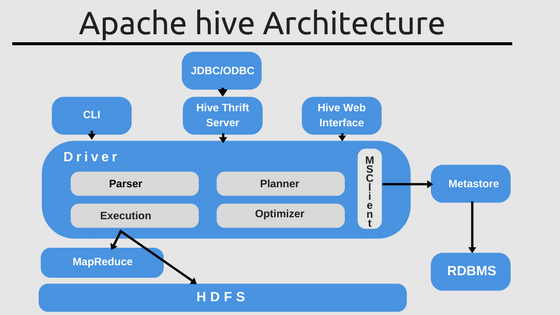

Apache Hive is a data warehouse technology and part of the Hadoop ecosystem, closely related to Big Data, which allows you to query and analyze large amounts of data stored in HDFS (Hadoop Distributed File System). It has its own query language called HQL (Hive Query Language), a variant of SQL, with the difference that Hive converts these queries into MapReduce jobs.

On the other hand, Elasticsearch is a RESTful search engine based on Lucene, a high-performance text search library that is, in turn, based on inverted indexes. Elasticsearch enables fast searches because it looks terms up in an index instead of scanning the text directly. An analogy might be finding a topic in a book by checking the index instead of reading page by page until you find what you’re looking for.
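To make the idea of an inverted index more concrete, here is a minimal Python sketch. It is not how Lucene is implemented; the function names `build_inverted_index` and `search` and the sample documents are made up for illustration. It only shows the principle: each word points to the documents that contain it, so a search is a lookup rather than a scan.

```python
from collections import defaultdict

def build_inverted_index(docs):
    """Map each lowercase word to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def search(index, query):
    """Return the ids of documents that contain every word in the query."""
    words = query.lower().split()
    if not words:
        return set()
    # Start from the documents for the first word, then intersect with the rest.
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result

# Hypothetical sample documents, keyed by id.
docs = {
    1: "Hive queries large data in HDFS",
    2: "Elasticsearch searches text fast",
    3: "Lucene powers fast text search",
}
index = build_inverted_index(docs)
print(sorted(search(index, "fast text")))  # [2, 3]
```

The search never reads the original texts again; it only intersects the sets stored under each word, which is why this kind of lookup stays fast as the collection grows.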

We’re getting started!

Please be patient; you should know a few things to understand some of the examples later. The basis of Apache Hive is the MapReduce framework, which combines two functions, map() and reduce(), usually written in Java.

Source: medium.com

The map() function receives a key and a value as parameters and returns a list of intermediate key-value pairs for each function call. The framework then groups these pairs by key, and the reduce() function is applied. It works in parallel for each group created from the map() output: it receives a key together with the list of values for that key and merges them into a smaller list.
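The flow described above can be sketched in a few lines of Python. This is a single-process illustration, not Hadoop code: real MapReduce jobs are distributed across machines, and the names `map_fn`, `shuffle`, `reduce_fn`, and `run_job` are invented for this example. It counts words, the classic MapReduce exercise.

```python
from collections import defaultdict

def map_fn(key, value):
    """Emit a (word, 1) pair for every word in one line of text."""
    return [(word, 1) for word in value.lower().split()]

def shuffle(pairs):
    """Group the emitted values by key, as the framework does between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_fn(key, values):
    """Merge all values for one key into a single count."""
    return key, sum(values)

def run_job(lines):
    """Run map, shuffle, and reduce in sequence over a list of lines."""
    mapped = []
    for line_no, line in enumerate(lines):
        mapped.extend(map_fn(line_no, line))
    return dict(reduce_fn(k, v) for k, v in shuffle(mapped).items())

counts = run_job(["big data big ideas", "data beats ideas"])
print(counts)  # {'big': 2, 'data': 2, 'ideas': 2, 'beats': 1}
```

In a real cluster, each call to `map_fn` and each call to `reduce_fn` could run on a different machine, which is what makes the model suitable for large amounts of data.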

Basic concepts of Elasticsearch

Here are some basic concepts of Elasticsearch:

- A document is the minimal unit of information, made up of keys and values (mmm.. where have I seen that before?)

- An index organizes documents and groups them by similar characteristics (for example, a list of people with first name, last name, and age)

- Nodes are computers where Elasticsearch performs searches and stores information.

- A cluster is a collection of nodes.

- An index can be divided into parts, and these parts of the index are called shards.

- And last but not least, replicas are copies of shards, much like a backup of the information.

Apache Hive and Elasticsearch Usage Examples

To further clarify these terms, here are a few examples to give you some context.

Managing an external database using Apache Hive

Let’s start with Apache Hive.

The example below shows how to create an external table, that is, a table whose storage Hive does not manage, so that data kept elsewhere can be queried from Hive. Pretty familiar, right? From the start, you can see its great similarity with SQL.

CREATE EXTERNAL TABLE accumulo_ck_2(key string, value string)

STORED BY 'org.apache.hadoop.hive.accumulo.AccumuloStorageHandler'

WITH SERDEPROPERTIES (

  "accumulo.table.name" = "accumulo_custom",

  "accumulo.columns.mapping" = ":rowid,cf:string");

STORED BY indicates that an external storage handler, rather than Hive itself, manages the table’s storage, and SERDEPROPERTIES is a Hive clause that passes configuration to a specific SerDe (serializer/deserializer) implementation.

Index Partitioning using Elasticsearch

For the Elasticsearch example, a PUT request creates an index, which can then hold JSON documents and make them searchable. By default, when you start a node in Elasticsearch, each new index gets one shard and one replica, but in the example below, we change the number of shards in the settings from 1 to 2, with one replica per shard.

We do this to be prepared for a large amount of information to index: the work is split into smaller parts to make searching more efficient and faster. Divide and conquer, they say.

curl -XPUT 'http://localhost:9200/another_user?pretty' -H 'Content-Type: application/json' -d '

{

  "settings": {

    "index.number_of_shards": 2,

    "index.number_of_replicas": 1

  }

}'
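To give a feel for what splitting an index into two shards means, here is a small Python sketch of how documents could be spread across shards by hashing their ids. This is a simplification under stated assumptions: Elasticsearch uses its own Murmur3-based routing hash and a slightly more involved formula, while this sketch uses `zlib.crc32` as a stand-in. The names `pick_shard` and `distribute` and the document ids are hypothetical.

```python
import zlib

def pick_shard(doc_id, number_of_shards):
    """Choose a primary shard from a hash of the document id."""
    # zlib.crc32 is only a stand-in here; Elasticsearch uses its own hash.
    return zlib.crc32(doc_id.encode("utf-8")) % number_of_shards

def distribute(doc_ids, number_of_shards=2):
    """Return a dict mapping each shard number to the ids routed to it."""
    shards = {n: [] for n in range(number_of_shards)}
    for doc_id in doc_ids:
        shards[pick_shard(doc_id, number_of_shards)].append(doc_id)
    return shards

# Hypothetical document ids for the another_user index.
shards = distribute(["user-1", "user-2", "user-3", "user-4"], number_of_shards=2)
for shard, ids in shards.items():
    print(shard, ids)
```

Because the same id always hashes to the same shard, a lookup by id only has to ask one shard, and a search can query all shards in parallel and merge the results.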

My Experience with the Environment

Installing Apache Hive

Regarding the Hive environment installation, Hadoop must be installed before Hive, and both are mainly aimed at Linux operating systems. From personal experience as a Windows user, I would venture to say that it was particularly difficult.

I first tried to do it on Windows, using Apache Derby to build the Hive metastore and Cygwin, a tool for running Linux commands on Windows, but it didn’t work as I expected. The lack of information on the internet about running Hadoop and Hive on Windows was a big obstacle to continuing. In the end, I had to find an alternative. I’m not saying it’s impossible, but it just got harder and harder for me and took me a long time.

My second option, which proved to be successful, was to install an Ubuntu virtual machine. I just had to run some commands carefully and it was ready to go. There are four important XML files in the Hadoop folder where you need to add some tags to make it work, and that was it. I would say that Hive is one of those platforms that take more time to install than to get the code working.

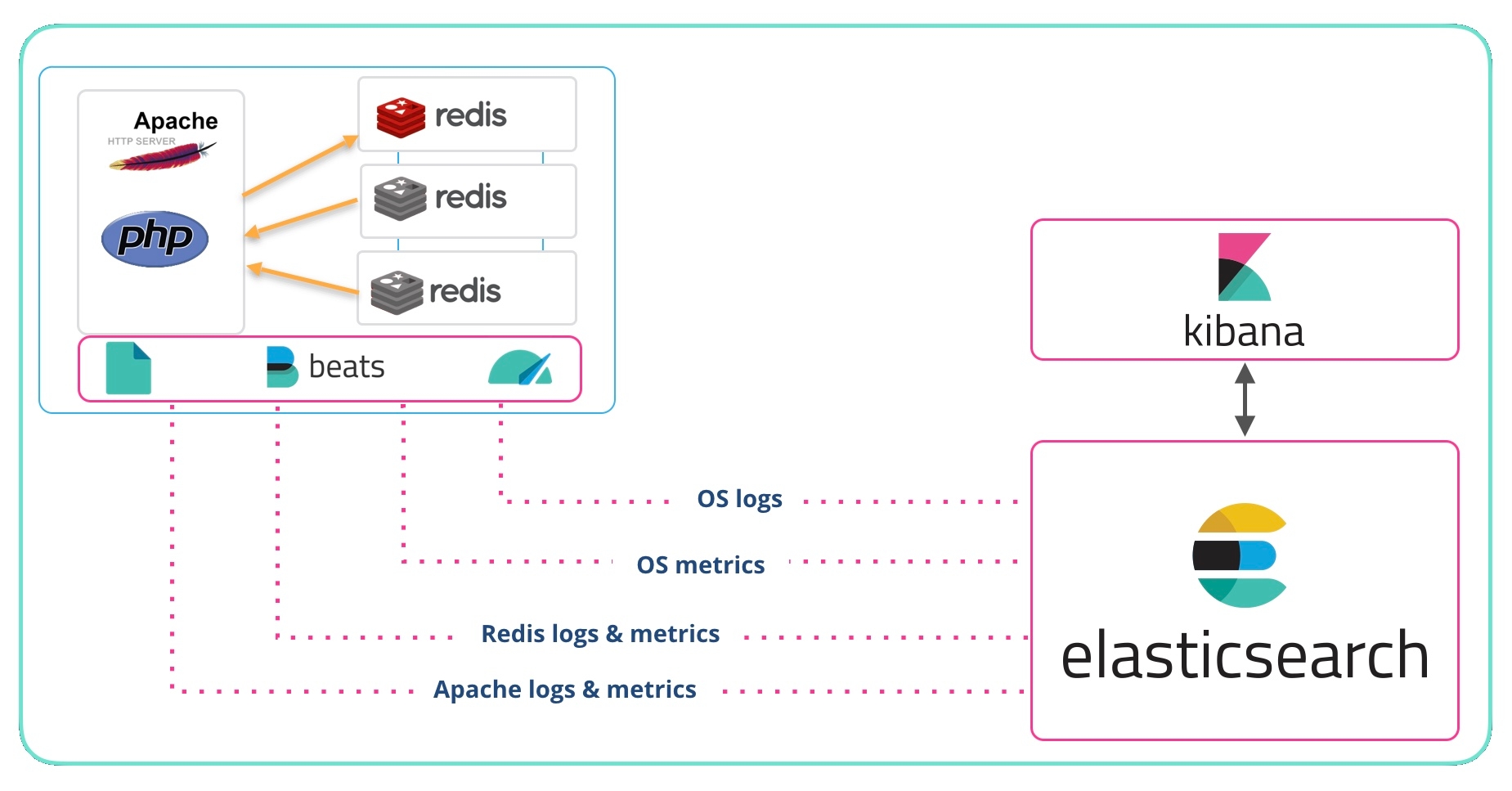

Installing Elasticsearch

Installing Elasticsearch was much easier than installing Hive. The same goes for Kibana, which is part of the Elastic Stack, and Cerebro, an open-source administration tool for Elasticsearch. Kibana allows you to search and visualize the data indexed in Elasticsearch. I entered some commands in the Kibana console, which applies them to Elasticsearch directly, and used Cerebro to see the results of those actions more visually.

I had to download some zips, extract them, run some files, and enter the appropriate ports (9200 for Elasticsearch, 5601 for Kibana, and 9000 for Cerebro) in my browser, and voilà! I could already do my first tests. This is what Cerebro looks like. You can see a node (the computer that is being used), 6 indexes, 9 shards, and 51 documents.

Source: https://www.elastic.co

In the previous image, the yellow line is not decoration. This line indicates the health status of the Elasticsearch cluster. Like a traffic light, it shows whether we are doing things right or not.

For example, green means that everything is fine and that all shards and their replicas have been assigned to their respective cluster nodes. A yellow line warns that one or more replicas cannot be assigned to any node, and a red line indicates that one or more primary shards have not been assigned to any node.
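The traffic-light rules above can be written down as a short Python function. This is only a sketch of the logic as described in this article, not Elasticsearch’s own code; the function name `cluster_health` and the dictionary layout for a shard copy are assumptions made for the example.

```python
def cluster_health(shards):
    """Return 'green', 'yellow', or 'red' for a list of shard copies.

    Each shard copy is a dict with two booleans:
    'primary' (is it a primary shard?) and 'assigned' (does it live on a node?).
    """
    # Any unassigned primary shard means data is unavailable: red.
    if any(s["primary"] and not s["assigned"] for s in shards):
        return "red"
    # All primaries are fine, but at least one replica has no node: yellow.
    if any(not s["primary"] and not s["assigned"] for s in shards):
        return "yellow"
    return "green"

# A single-node setup: the replica has no second node to live on.
single_node = [
    {"primary": True, "assigned": True},
    {"primary": False, "assigned": False},
]
print(cluster_health(single_node))  # yellow
```

This also explains why a one-node test installation with one replica per shard typically shows yellow: the replica cannot be placed on the same node as its primary.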

Difference between Hadoop and Elasticsearch

As I mentioned earlier, Hadoop is the ecosystem on which Hive runs. Hadoop and Elasticsearch are easy to confuse because they are similar to a certain extent: both deal with large amounts of data.

1. Hadoop works with parallel data processing using its distributed file system, while Elasticsearch is only a search engine.

2. Hadoop is based on the MapReduce framework, which is relatively more complex to understand and implement than Elasticsearch, whose queries are written in a JSON-based domain-specific language.

3. Hadoop is for batch processing, while Elasticsearch produces real-time queries and results.

4. Hadoop cluster setup is smoother than the Elasticsearch cluster setup, which is more error-prone.

5. Hadoop does not have the advanced search and analytics capabilities of Elasticsearch.

It can therefore be inferred that the strengths of one cover the shortcomings of the other. And that’s why there’s now something called Elasticsearch-Hadoop that combines the best of both: Hadoop’s large storage and powerful data processing with Elasticsearch’s analytics and real-time search.

Only with tools like Hive was it possible for large companies like Facebook or Netflix to start processing large amounts of information. Facebook alone has 2,449 million active users worldwide (Can you believe it?).

Even Amazon now has a fork of Apache Hive included in Amazon Elastic MapReduce, which runs on Amazon Web Services and is used to build and monitor applications. Elasticsearch’s user list includes companies like GitHub, Mozilla, and Etsy, to name a few.

Conclusion

Now you know how to determine which of these technologies meets your expectations and serves your purpose. While one is used for storing and processing a large amount of data, the other is used for efficient and fast searching.

- We cannot ignore the fact that the use of Big Data by companies is becoming more and more important, and so is the ability to manage all this information. Hive and Elasticsearch are just some of the tools that companies use; let’s take advantage of the fact that they are open-source.


The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
