Humans can often identify new objects after seeing only a few examples. Machines, by contrast, typically need thousands of samples to do the same, so learning from limited data is a long-standing challenge in machine learning. Recent advances have introduced approaches such as zero-shot learning, which aim to classify data using very few samples or none at all. In zero-shot learning, a model learns to recognize new objects by exploiting similarities between known and unknown categories, making educated guesses based on learned attributes. This lets machines adapt to new objects with limited or no prior examples, much like human intuition. In this article, you will learn what zero-shot learning is, how it works, how it differs from few-shot and one-shot learning, and why it matters.

This article was published as a part of the Data Science Blogathon.

Table of contents

- Understanding Zero Shot Learning

- How Does Zero Shot Learning Work?

- Few Shot Learning

- Importance of Few Shot Learning

- Applications of Few-Shot Learning

- One Shot Learning

- Importance and Applications of One Shot Learning

- Importance and Applications of Zero Shot Learning

- Difference between Few Shot, One Shot, and Zero Shot Learning

- Frequently Asked Questions

Understanding Zero Shot Learning

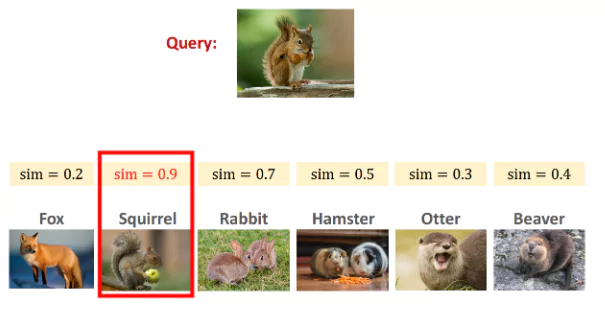

Zero-shot learning is a machine learning paradigm in which a pre-trained deep learning model is made to generalize to categories of samples it has not seen before. The idea is that, just as humans naturally find similarities between classes of data, a machine can be trained to identify new classes in the same way.

- Main aim of zero-shot learning:

  - Predict results without any training samples.

  - Recognize objects from classes not included in the training data.

- Basis of zero-shot learning:

  - Relies on knowledge transfer from instances provided during training.

- Proposed approach:

  - Learn intermediate semantic layers and properties during training.

  - Apply this knowledge to predict new, unseen classes of data.

For example, suppose you have seen a horse but never a zebra. If someone tells you that a zebra looks like a horse but has black and white stripes, you will probably recognize a zebra when you see one.

How Does Zero Shot Learning Work?

Here is how zero-shot learning works:

- Understanding Features: Instead of just learning from examples, the computer learns about the important features or traits that describe different things. For example, if it’s learning about animals, it might learn that cats have fur, whiskers, and sharp claws.

- Generalization: Once it understands these features, it can use them to recognize new things it hasn’t seen before. For instance, if it knows that animals with fur and whiskers are usually cats, it can guess that a new animal with those features is likely a cat.

- Using Clues: Sometimes, the computer gets additional information to help it understand new things. This might be descriptions or labels that tell it about the features of different categories.

- Testing: Finally, we test the computer to see how well it can recognize new things. We give it images or descriptions of things it wasn’t trained on, and see if it can correctly identify them based on what it’s learned about their features.

Overall, zero-shot learning is like teaching a computer to understand the essence of things, so it can make educated guesses about new things it encounters. It’s useful when we can’t show the computer every possible example during training, and we want it to be able to learn and adapt to new situations on its own.
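
The steps above can be sketched in a few lines of Python. This is only an illustrative sketch, not a definitive implementation: the attribute list, the class descriptions, and the "predicted" attribute scores are all made-up assumptions, and a real system would obtain the scores from a trained attribute predictor. The unseen class (zebra) is known only through its description, yet it can still be picked by matching attributes.

```python
import math

# Hypothetical attribute order: [has_fur, has_stripes, has_hooves, has_whiskers]
CLASS_ATTRIBUTES = {
    "horse": [1.0, 0.0, 1.0, 0.0],  # seen during training
    "cat": [1.0, 0.0, 0.0, 1.0],    # seen during training
    "zebra": [1.0, 1.0, 1.0, 0.0],  # unseen: known only from its description
}


def cosine_similarity(a, b):
    """Cosine of the angle between two attribute vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def zero_shot_classify(predicted_attributes, class_attributes=CLASS_ATTRIBUTES):
    """Return the class whose description best matches the predicted attributes."""
    return max(
        class_attributes,
        key=lambda name: cosine_similarity(predicted_attributes, class_attributes[name]),
    )


# Scores an (assumed) attribute predictor might output for a photo of a zebra.
predicted = [0.9, 0.8, 0.9, 0.1]
print(zero_shot_classify(predicted))  # prints "zebra"
```

Adding a new class here only requires adding its attribute description to the dictionary; no new training images are involved, which is the point of the zero-shot setting.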

Few Shot Learning

Few-shot learning refers to training models with very little data, in contrast to the usual practice of feeding them large amounts of data. It is a typical application of meta-learning: the model is trained on several related tasks during the meta-training phase so that it can generalize to unseen data from only a few examples.
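
As a rough illustration of classifying from a handful of examples, the sketch below uses a nearest-prototype rule in the spirit of prototypical networks: each class is represented by the mean of its few support embeddings, and a query is assigned to the closest mean. The 2-D embeddings and class names are invented for the example; in practice the embeddings would come from a network trained during meta-training.

```python
import math


def mean_vector(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(component) / n for component in zip(*vectors)]


def euclidean(a, b):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def build_prototypes(support_set):
    """support_set maps a class name to its few example embeddings (the 'shots')."""
    return {label: mean_vector(examples) for label, examples in support_set.items()}


def classify(query, prototypes):
    """Assign the query embedding to the class with the nearest prototype."""
    return min(prototypes, key=lambda label: euclidean(query, prototypes[label]))


# A 2-way 3-shot episode with made-up 2-D embeddings.
support_set = {
    "letter_a": [[0.9, 0.1], [1.0, 0.2], [0.8, 0.0]],
    "letter_b": [[0.1, 0.9], [0.2, 1.0], [0.0, 0.8]],
}
prototypes = build_prototypes(support_set)
print(classify([0.7, 0.3], prototypes))  # prints "letter_a"
```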

Importance of Few Shot Learning

- Lower data and compute costs: because few-shot learning needs less data to train a model, it reduces the cost of data collection and computation.

- Supervised and unsupervised machine learning tools often struggle to make predictions when data is insufficient; few-shot learning is very helpful in such cases.

- Humans can easily categorize handwritten characters after seeing a few examples, whereas a machine normally needs a lot of training data to identify them. Few-shot learning serves as a test bed in which computers are expected to learn from a few examples, as humans do.

- Machines can learn to recognize rare diseases with few-shot learning: computer vision models classify the anomalies using a very small amount of data.

Applications of Few-Shot Learning

- Computer Vision: Character recognition, image classification, other image applications (such as image retrieval and gesture recognition), and video applications.

- Natural Language Processing: Parsing, translation, sentiment classification from short reviews, user intent classification, text classification, sentiment analysis.

- Robotics: Visual navigation, continuous control, learning manipulation actions from a few demonstrations.

- Audio Processing: Voice conversion across different languages, voice conversion from one user to another.

- Other: Medical applications, IoT applications, mathematical applications, material science applications.

One Shot Learning

One-shot learning is a machine learning approach that needs very little data to identify or assess the similarities between objects. It is especially useful with deep learning models. In one-shot learning, the model is given only one instance (or very few examples) of each category for training. Typical examples are computer vision tasks such as facial recognition.

Importance and Applications of One Shot Learning

- The goal of one-shot learning is to identify and recognize the features of an object much as humans do, training the system to use prior knowledge to classify new objects.

- One-shot learning works well for computer vision tasks such as facial recognition and passport identification checks, where individuals must be classified accurately despite changes in appearance.

- One common approach to one-shot learning is to use Siamese networks.

- One-shot learning is used in voice cloning, IoT analytics, curve fitting in mathematics, one-shot drug discovery, and other medical applications.
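
The Siamese idea mentioned above can be sketched as follows: the same embedding function is applied to both inputs, and the two are declared a match when their embeddings are close. This is a toy sketch under stated assumptions. The fixed `WEIGHTS` matrix stands in for a trained network, and the feature vectors and the `threshold` value are hypothetical.

```python
import math

# Hypothetical stand-in for a trained embedding network. In a Siamese setup
# the SAME function (shared weights) embeds both inputs.
WEIGHTS = [
    [0.5, -0.2, 0.1],
    [0.3, 0.8, -0.5],
]


def embed(features):
    """Project a 3-D feature vector to a 2-D embedding with the shared weights."""
    return [sum(w * x for w, x in zip(row, features)) for row in WEIGHTS]


def distance(a, b):
    """Euclidean distance between two embeddings."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_same_identity(reference, candidate, threshold=0.5):
    """Compare one stored reference example against a new candidate."""
    return distance(embed(reference), embed(candidate)) < threshold


passport_photo = [1.0, 0.5, 0.2]  # the single reference example
same_person = [0.9, 0.6, 0.2]     # slightly different appearance
other_person = [0.1, 2.0, 1.5]

print(is_same_identity(passport_photo, same_person))   # prints True
print(is_same_identity(passport_photo, other_person))  # prints False
```

Because the decision is a comparison rather than a per-person classifier, enrolling a new individual only requires storing one reference example.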

Importance and Applications of Zero Shot Learning

1. Data labeling is labor-intensive. Zero-shot learning can be used when labeled training data is lacking for a specific class.

2. Zero-shot learning can be deployed in scenarios where the model has to handle new tasks without re-learning previously learned ones.

3. It can improve the generalization ability of a machine learning model.

4. Zero-shot learning can be a more effective way of acquiring new information than traditional methods such as learning through trial and error.

5. In image classification and object detection, zero-shot learning helps recognize visual categories that were absent from the training data.

6. Zero-shot learning also supports deep learning applications such as image generation and image retrieval.

Difference between Few Shot, One Shot, and Zero Shot Learning

- When only a small amount of data is available and the model must be trained on that data alone, few-shot learning is very helpful. It can be used in image classification and facial recognition.

- One-shot learning, on the other hand, uses even less data than few-shot learning: a single instance or example per class rather than a large dataset. It is most useful for tasks such as matching a person's image against an identification document.

- When no training data is available for a class but the system still has to identify or classify the object, zero-shot learning is the appropriate choice.
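
One way to see the difference is through the size of the labelled support set the model receives for each new class. The sketch below is illustrative only: the dataset, the helper names `sample_support_set` and `setting_name`, and the fixed seed are assumptions made for the example.

```python
import random


def sample_support_set(dataset, k_shot, seed=0):
    """Pick k_shot labelled examples per class; k_shot=0 yields empty lists."""
    rng = random.Random(seed)
    return {label: rng.sample(examples, k_shot) for label, examples in dataset.items()}


def setting_name(k_shot):
    """Name the learning setting for a small number of examples per class."""
    if k_shot == 0:
        return "zero-shot"
    if k_shot == 1:
        return "one-shot"
    return "few-shot"


dataset = {
    "cat": ["cat_1", "cat_2", "cat_3", "cat_4"],
    "dog": ["dog_1", "dog_2", "dog_3", "dog_4"],
}

for k in (0, 1, 3):
    support = sample_support_set(dataset, k)
    print(setting_name(k), {label: len(items) for label, items in support.items()})
```

With `k_shot=0` the support set is empty, so the model has to rely on side information such as class descriptions; with one or a few examples it can compare new inputs directly against them.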

Conclusion

We now have a fair understanding of few-shot, one-shot, and zero-shot learning. Despite a few drawbacks, these techniques make it possible to produce predictions with limited or no data.

The main takeaways are:

- These techniques are useful where little data is available and collecting large amounts of training data would take too long.

- For deep learning models that must work with scarce data, few-shot, one-shot, and zero-shot learning are strong options.

- One-shot and few-shot learning remove the need to train a model on billions of images.

- These techniques are widely used in classification, regression, and image recognition.

- All of them help overcome data scarcity and reduce costs.

Frequently Asked Questions

Zero-Shot Learning: It’s like teaching a computer to guess things it has never seen before. For example, you show it pictures of cats and dogs and later ask it to identify a giraffe, even though it has never seen one.

Unsupervised Learning: A computer tries to find patterns in information without being told what to look for specifically. It’s like letting the computer explore and discover things on its own.

Zero-shot learning is a type of machine learning where a model learns to recognize classes it has never seen during training. This means it can identify objects or concepts it wasn’t specifically taught to recognize.

Zero-Shot Learning: Think of teaching a computer to recognize things it has never seen by giving it a general idea. For instance, training it on various fruits and then asking it to identify a fruit it has never encountered.

One-Shot Learning: This is like teaching a computer about something using only one example. If you show it a single picture of a rare bird, it learns to recognize that bird even though it hasn’t seen many pictures of it.

Zero-shot learning with a large language model (LLM) means using natural language processing models such as GPT (Generative Pre-trained Transformer) to perform zero-shot tasks. These models can understand and generate human-like text, which makes them useful for tasks like text classification, even for classes they haven’t seen before.

An example of zero-shot learning is a computer vision model trained on a dataset containing images of various animals, but it has never seen an image of a specific rare species like a quokka during training. Despite this, if the model has learned