This article was published as a part of the Data Science Blogathon.

Introduction

AWS S3 is an object storage service offered by Amazon Web Services (AWS). It allows users to store and retrieve files quickly and securely from anywhere, and it can be combined with other AWS services to build scalable applications. Boto is the Python SDK (software development kit) for AWS. The original Boto library (often called Boto2) has been superseded by Boto3, a ground-up rewrite that is built on the lower-level Botocore library. Through the boto3 Python library, users can connect to AWS services, including S3, and work with their resources from Python code. It helps developers create, configure, and manage AWS services, making it easy to integrate them with Python applications, libraries, or scripts. This article covers how boto3 works and how to use it for S3 operations such as creating, listing, and deleting buckets and objects.

What is boto3

Key Features of boto3

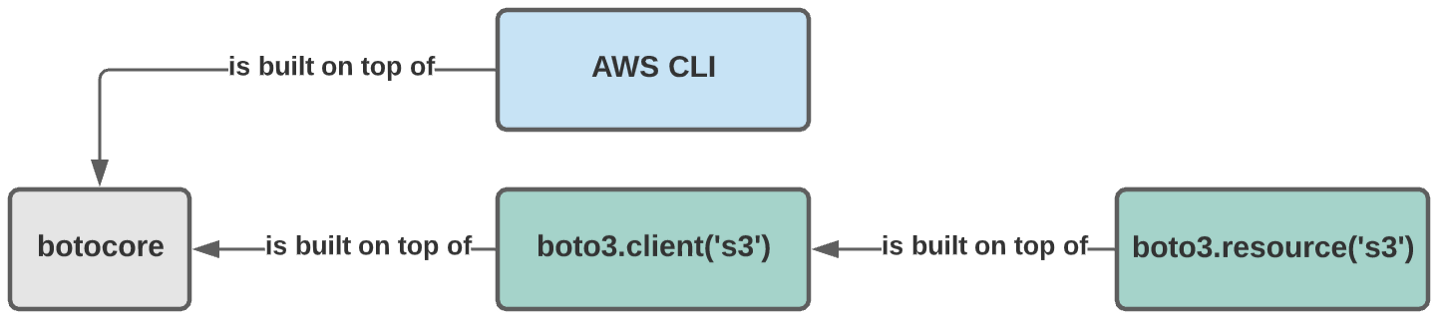

- It is built on top of botocore, a Python library used to send API requests to AWS and receive responses from the service.

- Early releases supported Python 2.7+ and 3.4+ natively; current releases support only recent Python 3 versions.

- It provides sessions with per-session credentials and configuration, along with essential components such as authentication, parameter handling, and response handling.

- It has a consistent and up-to-date interface.

Working with AWS S3 and Boto3

Source: https://dashbird.io/blog/boto3-aws-python/

Installation of boto3 and Building AWS S3 Client

pip list

pip install boto3

# Import the necessary packages
import boto3

# Now, build a client (replace the placeholders with your own values)
s3 = boto3.client(
    's3',
    aws_access_key_id='enter_your_aws_access_key_id',
    aws_secret_access_key='enter_your_aws_secret_access_key',
    region_name='enter_your_aws_region_name'
)
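Hard-coding keys in a script makes them easy to leak. As a minimal sketch, the client arguments can instead be collected from the standard AWS environment variables (`AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`, `AWS_DEFAULT_REGION`). The helper name `s3_client_kwargs` is hypothetical and not part of boto3; boto3 also reads these variables on its own when no credentials are passed, so the helper only makes that lookup explicit.

```python
import os


def s3_client_kwargs(env=None):
    """Build keyword arguments for boto3.client('s3') from environment variables.

    `env` is any mapping; it defaults to os.environ. Hypothetical helper,
    shown for illustration only.
    """
    env = os.environ if env is None else env
    kwargs = {}
    # Only pass credentials when both halves of the key pair are present.
    if env.get("AWS_ACCESS_KEY_ID") and env.get("AWS_SECRET_ACCESS_KEY"):
        kwargs["aws_access_key_id"] = env["AWS_ACCESS_KEY_ID"]
        kwargs["aws_secret_access_key"] = env["AWS_SECRET_ACCESS_KEY"]
    if env.get("AWS_DEFAULT_REGION"):
        kwargs["region_name"] = env["AWS_DEFAULT_REGION"]
    return kwargs


# Usage (requires boto3 and valid credentials in the environment):
#   import boto3
#   s3 = boto3.client("s3", **s3_client_kwargs())
```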

AWS S3 Operations With boto3

Creating buckets:

my_bucket = "enter your s3 bucket name that has to be created"
bucket = s3.create_bucket(ACL='private', Bucket=my_bucket)
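Outside the `us-east-1` region, `create_bucket` also expects a `CreateBucketConfiguration` with a matching `LocationConstraint`. The sketch below shows one way to handle both cases; the function name `create_bucket_in_region` is an assumption made for illustration, and `s3_client` is expected to be a client built as shown earlier.

```python
def create_bucket_in_region(s3_client, bucket_name, region_name):
    """Create a private bucket, adding a location constraint when needed.

    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    """
    params = {"Bucket": bucket_name, "ACL": "private"}
    # us-east-1 is the default location and must not be sent as a constraint.
    if region_name != "us-east-1":
        params["CreateBucketConfiguration"] = {"LocationConstraint": region_name}
    return s3_client.create_bucket(**params)


# Usage (requires boto3 and valid credentials):
#   import boto3
#   s3 = boto3.client("s3", region_name="eu-west-1")
#   create_bucket_in_region(s3, "my-example-bucket", "eu-west-1")
```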

Listing buckets:

bucket_response = s3.list_buckets()

# Output the bucket names
print('Existing buckets are:')
for bucket in bucket_response['Buckets']:
    print(f'  {bucket["Name"]}')

Deleting buckets:

my_bucket = "enter your s3 bucket name that has to be deleted"
# The bucket must be empty, otherwise this call fails
response = s3.delete_bucket(Bucket=my_bucket)
print("Bucket has been deleted successfully !!!")
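S3 only deletes a bucket once it contains no objects. A small guard, sketched below, checks for remaining objects before calling `delete_bucket`. The name `delete_bucket_if_empty` is hypothetical, and the check looks only at current objects; on a versioned bucket, old versions and delete markers would also have to be removed first.

```python
def delete_bucket_if_empty(s3_client, bucket_name):
    """Delete the bucket only when it holds no objects.

    Returns True if the bucket was deleted, False if objects remain.
    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    """
    # Asking for a single key is enough to learn whether anything is left.
    page = s3_client.list_objects_v2(Bucket=bucket_name, MaxKeys=1)
    if page.get("KeyCount", 0) > 0:
        return False
    s3_client.delete_bucket(Bucket=bucket_name)
    return True
```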

Listing objects in a bucket:

my_bucket = "enter your s3 bucket name from which objects or files have to be listed"
response = s3.list_objects(
    Bucket=my_bucket,
    MaxKeys=10,
    Prefix="only_files_starting_with_this_string"
)

s3 = boto3.client("s3")
my_bucket = "enter your s3 bucket name from which objects or files have to be listed"
response = s3.list_objects_v2(Bucket=my_bucket)

# "Contents" is missing when the bucket is empty, so fall back to an empty list
files = response.get("Contents", [])
for file in files:
    print(f"file_name: {file['Key']}, size: {file['Size']}")
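A single `list_objects_v2` call returns at most 1,000 objects. For larger buckets the response is truncated and carries a `NextContinuationToken` for the next page. The generator below is a minimal sketch of following those tokens; `iter_objects` is a hypothetical name, and `s3_client` is assumed to be a boto3 S3 client.

```python
def iter_objects(s3_client, bucket_name, prefix=""):
    """Yield every object entry in a bucket, following pagination tokens.

    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    """
    params = {"Bucket": bucket_name}
    if prefix:
        params["Prefix"] = prefix
    while True:
        page = s3_client.list_objects_v2(**params)
        # "Contents" is absent when a page has no objects.
        for obj in page.get("Contents", []):
            yield obj
        if not page.get("IsTruncated"):
            break
        params["ContinuationToken"] = page["NextContinuationToken"]


# Usage (requires boto3 and valid credentials):
#   for obj in iter_objects(s3, "my-example-bucket", prefix="logs/"):
#       print(obj["Key"], obj["Size"])
```

boto3 also ships built-in paginators (`s3.get_paginator("list_objects_v2")`) that wrap the same loop; the explicit version is shown here only to make the mechanism visible.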

Uploading files:

- Filename: the path of the local file to be uploaded

- Key: the unique identifier for an object within a bucket

- Bucket: the name of the bucket to which the file has to be uploaded

my_bucket = "enter your bucket name to which files have to be uploaded"
file_name = "enter your file path name to be uploaded"
key_name = "enter unique identifier"
s3.upload_file(Filename=file_name, Bucket=my_bucket, Key=key_name)
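`upload_file` also accepts an `ExtraArgs` dictionary for object settings such as `ContentType`. The following sketch guesses the content type from the file extension with the standard `mimetypes` module and defaults the key to the file's base name. The function name `upload_with_content_type` and the key default are assumptions made for illustration.

```python
import mimetypes
import os


def upload_with_content_type(s3_client, file_path, bucket_name, key_name=None):
    """Upload a local file and set its Content-Type when it can be guessed.

    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    Returns the key under which the object was stored.
    """
    # Default the object key to the file name without its directory.
    key_name = key_name or os.path.basename(file_path)
    content_type, _ = mimetypes.guess_type(file_path)
    extra_args = {"ContentType": content_type} if content_type else None
    s3_client.upload_file(
        Filename=file_path,
        Bucket=bucket_name,
        Key=key_name,
        ExtraArgs=extra_args,
    )
    return key_name
```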

Downloading files:

my_bucket = "enter your s3 bucket name from which objects or files have to be downloaded"
file_name = "enter the local path where the downloaded file should be saved"
key_name = "enter unique identifier"
s3.download_file(Filename=file_name, Bucket=my_bucket, Key=key_name)
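`download_file` writes to the local path it is given, so the target directory should exist beforehand. A minimal sketch, assuming the object should be saved under its base name inside a chosen directory (`download_to_dir` and `dest_dir` are hypothetical names):

```python
import os


def download_to_dir(s3_client, bucket_name, key_name, dest_dir):
    """Download one object into dest_dir, creating the directory if needed.

    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    Returns the local path the object was written to.
    """
    os.makedirs(dest_dir, exist_ok=True)
    # Keep only the last path segment of the key as the local file name.
    local_path = os.path.join(dest_dir, os.path.basename(key_name))
    s3_client.download_file(Bucket=bucket_name, Key=key_name, Filename=local_path)
    return local_path
```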

Deleting objects:

my_bucket = "enter your s3 bucket name from which objects or files have to be deleted"
key_name = "enter unique identifier"
s3.delete_object(Bucket=my_bucket, Key=key_name)
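When many objects have to go, the client's `delete_objects` call removes up to 1,000 keys per request, which avoids one round trip per object. The sketch below splits a list of keys into batches; `delete_keys` and its `batch_size` parameter are hypothetical names used for illustration.

```python
def delete_keys(s3_client, bucket_name, keys, batch_size=1000):
    """Delete the given keys in batches and return how many were requested.

    Hypothetical helper: `s3_client` is a boto3 S3 client created elsewhere.
    S3 accepts at most 1000 keys per delete_objects request.
    """
    keys = list(keys)
    for start in range(0, len(keys), batch_size):
        batch = keys[start:start + batch_size]
        s3_client.delete_objects(
            Bucket=bucket_name,
            # Quiet mode limits the response to keys that failed to delete.
            Delete={"Objects": [{"Key": key} for key in batch], "Quiet": True},
        )
    return len(keys)
```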

Getting object metadata:

my_bucket = "enter your s3 bucket name from which an object's or file's metadata has to be obtained"
key_name = "enter unique identifier"
response = s3.head_object(Bucket=my_bucket, Key=key_name)
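The `head_object` response is a dictionary that includes fields such as `ContentLength`, `ContentType`, `LastModified`, `ETag`, and the user-defined `Metadata`. As a rough sketch, a small helper can pull out the commonly used ones (`summarize_head` and the output key names are hypothetical):

```python
def summarize_head(response):
    """Pick commonly used fields out of a head_object response dictionary.

    Hypothetical helper for illustration; it does not call AWS itself.
    """
    return {
        "size_bytes": response["ContentLength"],
        "content_type": response.get("ContentType"),
        "last_modified": response.get("LastModified"),
        # The ETag value is returned wrapped in double quotes.
        "etag": response.get("ETag", "").strip('"'),
        "user_metadata": response.get("Metadata", {}),
    }


# Usage (requires boto3 and valid credentials):
#   response = s3.head_object(Bucket=my_bucket, Key=key_name)
#   print(summarize_head(response))
```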

Conclusion

- AWS S3 is an object storage service that helps store and retrieve files quickly.

- Boto3 is a Python SDK or library that can manage Amazon S3, EC2, DynamoDB, SQS, CloudWatch, etc.

- Boto3 clients provide a low-level interface to the AWS services, whereas resources are a higher-level abstraction than clients.

- Using the Boto3 library with Amazon S3 allows users to create, list, delete, and update S3 buckets, objects, bucket policies, etc., directly from Python programs or scripts.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.