Data engineering plays a pivotal role in the vast data ecosystem by collecting, transforming, and delivering data essential for analytics, reporting, and machine learning. Aspiring data engineers often seek real-world projects to gain hands-on experience and showcase their expertise. This article presents the top 20 data engineering project ideas with their source code. Whether you’re a beginner, an intermediate-level engineer, or an advanced practitioner, these projects offer an excellent opportunity to sharpen your big data and data engineering skills.

The projects below are grouped by skill level and platform, and each one lists an objective, a suggested tech stack, and a pointer to source code. Working through them shows how to approach a data engineering project step by step, so that by the end you can plan and build your own with confidence.

Table of Contents

- Data Engineering Projects for Beginners

- Intermediate-level Data Engineer Portfolio Project Examples for 2023

- Data Engineering Projects on GitHub

- Advanced Data Engineering Projects for Resume

- Azure Data Engineering Projects

- AWS Data Engineering Project Ideas

- Recap of Data Engineering Projects

- Conclusion

- Frequently Asked Questions

Data Engineering Projects for Beginners

1. Smart IoT Infrastructure

Objective

The main goal of this project is to build a reliable data pipeline for collecting and analyzing data from IoT (Internet of Things) devices. Webcams, temperature sensors, motion detectors, and other IoT devices generate large volumes of data. You will design a system that ingests, stores, processes, and analyzes this data efficiently, which makes real-time monitoring and data-driven decision-making possible.

Tech Stack for this Project

- Ingestion: Apache Kafka, MQTT

- Storage: Apache Cassandra, MongoDB

- Processing: Apache Spark Streaming, Apache Flink

- Visualization: Grafana, Kibana
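The processing step can be illustrated without any streaming infrastructure. The following is a minimal sketch, assuming readings arrive one at a time as `(device_id, value)` pairs; the `RollingMonitor` class, the window size, and the threshold are hypothetical names and values chosen for illustration. In a real pipeline the same logic would sit inside a Spark Streaming or Flink job fed by Kafka or MQTT.

```python
from collections import deque
from statistics import mean


class RollingMonitor:
    """Keep the last `window` readings per device and flag high averages."""

    def __init__(self, window=3, threshold=30.0):
        self.window = window          # number of recent readings to average
        self.threshold = threshold    # alert when the rolling mean exceeds this
        self.readings = {}            # device_id -> deque of recent values

    def ingest(self, device_id, value):
        """Record one reading and return (rolling_mean, alert_flag)."""
        buf = self.readings.setdefault(device_id, deque(maxlen=self.window))
        buf.append(value)
        avg = mean(buf)
        return avg, avg > self.threshold


# Hypothetical temperature readings from a single sensor.
monitor = RollingMonitor(window=3, threshold=30.0)
for temp in [28.0, 31.0, 34.0]:
    avg, alert = monitor.ingest("sensor-1", temp)
    print(f"sensor-1 rolling_avg={avg:.1f} alert={alert}")
```

Averaging over a short window rather than alerting on single readings is one simple way to avoid reacting to a lone noisy measurement.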

Click here to check the source code

2. Aviation Data Analysis

Objective

This project develops a data pipeline that collects, processes, and analyzes aviation data from sources such as the Federal Aviation Administration (FAA), airlines, and airports. Aviation data covers flights, airports, weather, and passenger demographics. Your goal is to extract meaningful insights from this data to improve flight scheduling, enhance safety measures, and optimize other aspects of the aviation industry.

Tech Stack Used

- Ingestion: Apache NiFi, AWS Kinesis

- Storage: Amazon Redshift, Google BigQuery

- Processing & Analysis: Python (Pandas, Matplotlib)

- Visualization: Tableau, Power BI
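As a small illustration of the analysis step, here is a sketch that computes the average departure delay per carrier. The CSV layout, column names, and values are made up for this example and do not come from any FAA dataset; plain Python stands in for what a Pandas `groupby` would do so the snippet has no dependencies.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical sample data; a real project would read exported flight records.
SAMPLE_CSV = """carrier,origin,dep_delay_min
AA,JFK,12
AA,LAX,0
DL,ATL,45
DL,JFK,15
UA,ORD,
"""


def average_delay_by_carrier(csv_text):
    """Return {carrier: mean departure delay}, skipping rows with no delay."""
    delays = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        raw = row["dep_delay_min"].strip()
        if not raw:  # missing value: leave the row out rather than guess
            continue
        delays[row["carrier"]].append(float(raw))
    return {carrier: mean(values) for carrier, values in delays.items()}


print(average_delay_by_carrier(SAMPLE_CSV))
```

Dropping rows with missing delays is only one possible policy; depending on the analysis, imputing or flagging them may be more appropriate.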

Click here to view the Source Code

3. Shipping and Distribution Demand Forecasting

Objective

In this project, your objective is to create a robust ETL (Extract, Transform, Load) pipeline that processes shipping and distribution data. By using historical data, you will build a demand forecasting system that predicts future product demand in the context of shipping and distribution. This is crucial for optimizing inventory management, reducing operational costs, and ensuring timely deliveries.

Tech Stack Used

- ETL Pipeline: Apache NiFi, Talend

- Data Transformation: Python, Apache Spark

- Forecasting Models: ARIMA, Prophet

- Data Storage: PostgreSQL, MySQL
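Before reaching for ARIMA or Prophet, it can help to have a simple baseline to compare against. The sketch below uses simple exponential smoothing, which is a different and much simpler technique than either of the models listed above; the function name, the `alpha` default, and the sample demand figures are assumptions for illustration.

```python
def exponential_smoothing_forecast(history, alpha=0.5):
    """One-step-ahead forecast using simple exponential smoothing.

    `history` is a list of past demand values, oldest first.
    `alpha` in (0, 1] controls how strongly recent values are weighted.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in the interval (0, 1]")

    level = history[0]  # start from the first observation
    for observed in history[1:]:
        # Blend the newest observation with the running level.
        level = alpha * observed + (1 - alpha) * level
    return level


# Hypothetical weekly shipment counts.
weekly_demand = [100, 120, 110, 130]
print(exponential_smoothing_forecast(weekly_demand, alpha=0.5))
```

A baseline like this gives a reference error level, so a more complex model can be judged by how much it actually improves on it.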

Click here to view the source code for this data engineering project.

4. Event Data Analysis

Objective

Build a data pipeline that collects information about events such as conferences, sporting events, concerts, and social gatherings. The project covers real-time data processing, sentiment analysis of social media posts about these events, and visualizations that surface trends and insights as they emerge.

Tech Stack Used for this Data Engineering Project

- Data Ingestion: Twitter API, web scraping

- Sentiment Analysis: Python (NLTK, spaCy)

- Real-Time Processing: Apache Kafka, Apache Flink

- Visualization: Dash, Plotly
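To make the sentiment step concrete, here is a deliberately tiny lexicon-based scorer. The word lists, function names, and sample posts are invented for this sketch; a real project would more likely use a trained analyzer from NLTK or spaCy rather than a hand-written word list.

```python
import re
from collections import Counter

# Toy lexicons, assumed for illustration only.
POSITIVE = {"great", "love", "amazing", "fun"}
NEGATIVE = {"bad", "boring", "hate", "late"}


def score_post(text):
    """Return (#positive words - #negative words) for one post."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words)


def label(score):
    """Map a numeric score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def summarize(posts):
    """Count how many posts fall under each sentiment label."""
    return Counter(label(score_post(p)) for p in posts)


posts = [
    "Love the keynote, amazing crowd!",
    "Boring and late",
    "See you there",
]
print(summarize(posts))
```

The per-label counts are the kind of aggregate a Dash or Plotly chart could display and refresh as new posts arrive.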

Click here to check the source code.

Intermediate-level Data Engineer Portfolio Project Examples for 2023

5. Log Analytics Project

Objective

Build a comprehensive log analytics system that collects logs from various sources, including servers, applications, and network devices. The system should centralize log data, detect anomalies, facilitate troubleshooting, and optimize system performance through log-based insights.

Tech Stack Used

- Log Collection: Logstash, Fluentd

- Log Storage and Indexing: Elasticsearch

- Visualization and Dashboards: Kibana

- Alerting: Elasticsearch Watcher, Grafana Alerts
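The core idea of log-based alerting can be sketched in a few lines before any Elastic tooling is involved. The log line format, the regular expression, and the threshold below are assumptions made for this example; Logstash or Fluentd would normally handle the parsing, and Elasticsearch the counting.

```python
import re
from collections import Counter

# Assumed line format: "<ISO timestamp> <LEVEL> <message>".
LOG_PATTERN = re.compile(
    r"^(?P<minute>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}):\d{2} (?P<level>[A-Z]+) (?P<msg>.*)$"
)


def errors_per_minute(lines):
    """Count ERROR lines per minute; lines that do not parse are ignored."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and match.group("level") == "ERROR":
            counts[match.group("minute")] += 1
    return counts


def noisy_minutes(counts, limit):
    """Return the minutes whose error count exceeds `limit`, in order."""
    return sorted(minute for minute, n in counts.items() if n > limit)


# Hypothetical log lines.
sample = [
    "2024-01-01T10:00:05 INFO service started",
    "2024-01-01T10:01:10 ERROR db timeout",
    "2024-01-01T10:01:40 ERROR db timeout",
    "2024-01-01T10:02:00 ERROR disk full",
    "not a log line",
]
counts = errors_per_minute(sample)
print(noisy_minutes(counts, limit=1))
```

A fixed threshold is the simplest possible anomaly rule; comparing each minute against a rolling baseline would be a natural next step.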

Click here to explore this data engineering project

6. Movielens Data Analysis for Recommendations

Objective

Design and develop a recommendation engine using the MovieLens dataset. To ensure data quality, build a robust Extract, Transform, Load (ETL) pipeline that preprocesses and cleans the dataset by handling missing values, removing duplicates, and organizing the data for analysis. Then implement collaborative filtering algorithms that offer personalized movie suggestions based on each user's preferences and past interactions. This approach draws on the ratings of similar users to make recommendations.

Tech Stack Used

- ETL Pipeline: Apache Spark, AWS Glue

- Collaborative Filtering: Scikit-learn, TensorFlow

- Data Storage: Amazon S3, Hadoop HDFS

- Web Application: Flask, Django
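Collaborative filtering is easier to reason about with a toy example. The sketch below implements user-based filtering with cosine similarity in plain Python; the ratings are made up (they are not taken from the MovieLens files), and the function names are hypothetical. Scikit-learn or TensorFlow would replace this at real dataset sizes.

```python
from math import sqrt

# Invented ratings on a 1-5 scale, for illustration only.
RATINGS = {
    "alice": {"Toy Story": 5, "Heat": 1, "Fargo": 4},
    "bob": {"Toy Story": 4, "Heat": 2, "Fargo": 5, "Casino": 4},
    "carol": {"Toy Story": 1, "Heat": 5, "Casino": 2},
}


def cosine(a, b):
    """Cosine similarity between two users' rating dictionaries."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[m] * b[m] for m in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, ratings, top_n=3):
    """Rank unseen movies by the similarity-weighted ratings of other users."""
    mine = ratings[user]
    scores, weights = {}, {}
    for other, theirs in ratings.items():
        if other == user:
            continue
        sim = cosine(mine, theirs)
        if sim <= 0:
            continue
        for movie, rating in theirs.items():
            if movie in mine:  # only suggest movies the user has not rated
                continue
            scores[movie] = scores.get(movie, 0.0) + sim * rating
            weights[movie] = weights.get(movie, 0.0) + sim
    predicted = {m: scores[m] / weights[m] for m in scores}
    return sorted(predicted, key=predicted.get, reverse=True)[:top_n]


print(recommend("alice", RATINGS))
```

Because users with similar tastes get higher weights, bob's opinion of an unseen movie counts for more than carol's when predicting for alice.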

Click here to explore this data engineering project.

7. Retail Analytics Project

Objective

Create a retail analytics platform that ingests data from various sources, including point-of-sale systems, inventory databases, and customer interactions. Analyze sales trends, optimize inventory management, and generate personalized product recommendations for customers.

Tech Stack Used in this Data Engineering Project

- ETL Processes: Apache Beam, AWS Data Pipeline

- Machine Learning Algorithms: XGBoost, Random Forest

- Data Warehousing: Snowflake, Azure Synapse Analytics

- Visualization: Tableau, Looker

Click here to explore the source code.

Data Engineering Projects on GitHub

8. Real-time Data Analytics

Objective

Contribute to an open-source project focused on real-time data analytics. This project provides an opportunity to improve the project’s data processing speed, scalability, and real-time visualization capabilities. You may be tasked with enhancing the performance of data streaming components, optimizing resource usage, or adding new features to support real-time analytics use cases.

Tech Stack Used in this Project

- Technologies: Apache Flink, Spark Streaming, Apache Storm

- Tasks: Optimizing data streaming components for speed, scalability, and resource efficiency

- Methods: Fine-tuning processing algorithms, enhancing parallelism, introducing caching mechanisms

Click here to explore the source code for this data engineering project.

9. Real-time Data Analytics with Azure Stream Services

Objective

Explore Azure Stream Analytics by contributing to or creating a real-time data processing project on Azure. This may involve integrating Azure services like Azure Functions and Power BI to gain insights and visualize real-time data. You can focus on enhancing the real-time analytics capabilities and making the project more user-friendly.

Tech Stack Used in this Project

- Objectives: Enhance real-time analytics capabilities on Azure using Azure Stream Analytics, Azure Functions, and Power BI.

- Setup: Create Azure Stream Analytics environment, configure inputs/outputs, integrate Azure Functions and Power BI.

- Data Processing: Ingest real-time data, apply SQL-like transformations, implement custom logic with Azure Functions.

- Visualization: Use Power BI for real-time data visualization, focusing on user-friendly design and intuitive insights.

Click here to explore the source code for this data engineering project.

10. Real-time Financial Market Data Pipeline with Finnhub API and Kafka

Objective

Build a data pipeline that collects and processes real-time stock market data using the Finnhub API and Apache Kafka. This project involves analyzing stock prices, performing sentiment analysis on news data, and visualizing real-time market trends. Contributions can include optimizing data ingestion, extending the analysis available to data analysts, or improving the visualization components.

Tech Stack Used in this Project

- Goals: Build a data pipeline for real-time financial stock market data using Apache Kafka and the Finnhub API.

- Pipeline Setup: Utilize Apache Kafka for data ingestion and processing from the Finnhub API.

- Data Analysis: Perform stock price analysis and sentiment analysis on news data.

- Visualization: Visualize real-time market trends and optimize components for data ingestion, analysis, and visualization.
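One small piece of the stock price analysis can be sketched independently of Kafka: computing a volume-weighted average price (VWAP) per symbol from a batch of trade messages. The message shape used here (a JSON object with `type` and a `data` list whose items carry `s`, `p`, and `v` for symbol, price, and volume) is an assumption about the feed and should be checked against the Finnhub documentation; the function name and sample values are hypothetical.

```python
import json
from collections import defaultdict


def vwap_by_symbol(raw_messages):
    """Return {symbol: volume-weighted average price} for trade messages."""
    notional = defaultdict(float)  # sum of price * volume per symbol
    volume = defaultdict(float)    # sum of volume per symbol
    for raw in raw_messages:
        msg = json.loads(raw)
        if msg.get("type") != "trade":  # skip pings and other message types
            continue
        for trade in msg.get("data", []):
            notional[trade["s"]] += trade["p"] * trade["v"]
            volume[trade["s"]] += trade["v"]
    return {s: notional[s] / volume[s] for s in volume if volume[s] > 0}


# Hypothetical messages, as they might be read from a Kafka topic.
messages = [
    '{"type": "trade", "data": [{"s": "AAPL", "p": 100, "v": 1}]}',
    '{"type": "ping"}',
    '{"type": "trade", "data": [{"s": "AAPL", "p": 110, "v": 3}]}',
]
print(vwap_by_symbol(messages))
```

In the full pipeline, a Kafka consumer would supply `raw_messages` continuously and the result would feed the dashboard.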

Click here to explore the source code for this project.

11. Real-time Music Application Data Processing Pipeline

Objective

Collaborate on a real-time music streaming data project focused on processing and analyzing user behavior data in real time. You’ll explore user preferences, track popularity, and enhance the music recommendation system. Contributions may include improving data processing efficiency, implementing advanced recommendation algorithms, or developing real-time dashboards.

Tech Stack Used in this Data Engineering Project

- Project Goals: Collaborate on a real-time music streaming data project focusing on user behavior analysis and music recommendation enhancement.

- Data Processing: Implement real-time processing to explore user preferences, track popularity, and refine recommendations.

- Efficiency Improvements: Identify and implement optimizations in the data processing pipeline.

- Recommendation Algorithms: Develop and integrate advanced algorithms to enhance music recommendations.

- Real-time Dashboards: Create dashboards for monitoring and visualizing user behavior data, with continuous enhancements.

Click here to explore the source code.

Advanced Data Engineering Projects for Resume

12. Website Monitoring

Objective

Develop a comprehensive website monitoring system that tracks performance, uptime, and user experience. This project involves utilizing tools like Selenium for web scraping to collect data from websites and creating alerting mechanisms for real-time notifications when performance issues are detected.

Tech Stack Used

- Objectives: Develop a website monitoring system to track performance, uptime, and user experience.

- Tools Used: Selenium for web scraping to collect website data.

- Alerting Mechanisms: Implement real-time alerts for performance issues and downtime detection.

- System Features: Track website performance metrics comprehensively.

- Maintenance: Plan for ongoing optimization and maintenance to ensure system effectiveness.
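The uptime and alerting logic can be separated from the tool that actually loads the page. The following sketch takes a `fetch` callable that returns an HTTP status code, so the same check could wrap Selenium, `urllib`, or a test stub; `CheckResult`, `run_check`, `uptime_percent`, and the two-second slowness limit are all hypothetical choices for illustration.

```python
import time
from dataclasses import dataclass


@dataclass
class CheckResult:
    url: str
    ok: bool
    latency_s: float


def run_check(url, fetch, slow_after_s=2.0):
    """Call fetch(url) and classify the result.

    `fetch` must return an HTTP status code; any exception counts as downtime.
    A response is healthy when the status is 2xx or 3xx and it arrived within
    `slow_after_s` seconds.
    """
    start = time.perf_counter()
    try:
        status = fetch(url)
    except Exception:
        status = None
    latency = time.perf_counter() - start
    ok = status is not None and 200 <= status < 400 and latency <= slow_after_s
    return CheckResult(url, ok, latency)


def uptime_percent(results):
    """Share of healthy checks, as a percentage."""
    if not results:
        return 0.0
    return 100.0 * sum(r.ok for r in results) / len(results)


# Stub fetchers stand in for real network calls.
results = [
    run_check("https://example.com", lambda url: 200),
    run_check("https://example.com", lambda url: 503),
]
print(f"uptime: {uptime_percent(results):.0f}%")
```

Injecting the fetcher keeps the monitoring logic testable without network access, and an alert can be raised whenever `ok` is false or the uptime percentage drops below a chosen target.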

Click here to explore the source code of this data engineering project.

13. Bitcoin Mining

Objective

Dive into the cryptocurrency world by creating a Bitcoin mining data pipeline. Analyze transaction patterns, explore the blockchain network, and gain insights into the Bitcoin ecosystem. This project will require data collection from blockchain APIs, analysis, and visualization.

Tech Stack Used in this Data Engineering Project

- Objectives: Create a Bitcoin mining data pipeline for transaction analysis and blockchain exploration.

- Data Collection: Implement mechanisms to collect mining-related data from blockchain APIs.

- Blockchain Analysis: Explore transaction patterns and gain insights into the Bitcoin ecosystem.

- Data Visualization: Develop components for visualizing Bitcoin network insights.

- Comprehensive Data Pipeline: Integrate data collection, analysis, and visualization for a holistic view of Bitcoin mining activities.

Click here to explore the source code for this data engineering project.

14. GCP Project to Explore Cloud Functions

Objective

Explore Google Cloud Platform (GCP) by designing and implementing a data engineering project that leverages GCP services like Cloud Functions, BigQuery, and Dataflow. This project can include data processing, transformation, and visualization tasks, focusing on optimizing resource usage and improving data engineering workflows.

Tech Stack for this Project

- GCP Services: Integrate Cloud Functions, BigQuery, and Dataflow for efficient data processing, transformation, and visualization.

- Execution: Perform data processing and transformation tasks aligned with project goals.

- Resource Optimization: Optimize resource usage within the GCP environment to improve efficiency.

- Workflow Improvement: Continuously enhance data engineering workflows for streamlined and effective processes.

Click here to explore the source code for this project.

15. Visualizing Reddit Data

Objective

Collect and analyze data from Reddit, one of the most popular social media platforms. Create interactive visualizations and gain insights into user behavior, trending topics, and sentiment analysis on the platform. This project will require web scraping, data analysis, and creative data visualization techniques.

Tech Stack Used

- Data Collection: Use web scraping to gather data from Reddit.

- Analysis: Explore user behavior, identify trends, and conduct sentiment analysis.

- Visualization: Create interactive and innovative visualizations to convey insights effectively.

- Presentation: Employ creative techniques for clear and engaging data presentation.

Click here to explore the source code for this project.

Azure Data Engineering Projects

16. Yelp Data Analysis

Objective

The objective of this project is to analyze Yelp data by building a data pipeline to extract, transform, and load the information. The analysis includes identifying popular businesses, conducting sentiment analysis on user reviews, and providing actionable insights to local businesses for improving their services based on the data.

Tech Stack Used in this Data Engineering Project

- Data Extraction: Web scraping techniques or Yelp API.

- Data Cleaning and Preprocessing: Python (Pandas, NumPy) or Azure Data Factory.

- Data Storage: Azure Blob Storage or Azure Synapse Analytics (formerly Azure SQL Data Warehouse).

- Data Analysis: Python libraries like Pandas and Matplotlib.

Click here to explore the source code for this project.

17. Data Governance

Objective

Data governance is critical for ensuring data quality, compliance, and security. In this project, you will design and implement a data governance framework using Azure services. This may involve defining data policies, creating data catalogs, and setting up data access controls to ensure data is used responsibly and in accordance with regulations.

Tech Stack Used

- Data Catalog and Classification: Microsoft Purview (formerly Azure Purview).

- Data Policy Implementation: Azure Policy and Azure Blueprints.

- Access Control: Role-Based Access Control (RBAC) and Microsoft Entra ID (formerly Azure Active Directory) integration.

Click here to explore the source code for this data engineering project.

18. Real-time Data Ingestion

Objective

Design a real-time data ingestion pipeline on Azure using services like Azure Data Factory, Azure Stream Analytics, and Azure Event Hubs. The goal is to ingest data from various sources and process it in real time, providing immediate insights for decision-making.

Tech Stack Used in the Data Engineering Project

- Data Ingestion: Azure Event Hubs.

- Real-Time Data Processing: Azure Stream Analytics.

- Data Storage: Azure Data Lake Storage or Azure SQL Database.

- Visualization: Power BI or Azure Dashboards.
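Real-time processing in this kind of pipeline often comes down to windowed aggregation. The sketch below counts events per key in fixed, non-overlapping (tumbling) windows; it is a plain-Python illustration of the concept, not Azure Stream Analytics query syntax, and the function name and event tuples are assumptions.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_s=60):
    """Count events per (window_start, key).

    `events` is an iterable of (epoch_seconds, key) pairs. Each event belongs
    to exactly one window, whose start is the timestamp rounded down to a
    multiple of `window_s`.
    """
    counts = defaultdict(int)
    for ts, key in events:
        window_start = ts - (ts % window_s)
        counts[(window_start, key)] += 1
    return dict(counts)


# Hypothetical events: (seconds since some start time, device id).
events = [(0, "a"), (59, "a"), (60, "a"), (61, "b")]
print(tumbling_window_counts(events, window_s=60))
```

In the Azure version of this project, Event Hubs would deliver the events and Stream Analytics would perform the windowing with its SQL-like query language.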

Click here to explore the source code for this project.

AWS Data Engineering Project Ideas

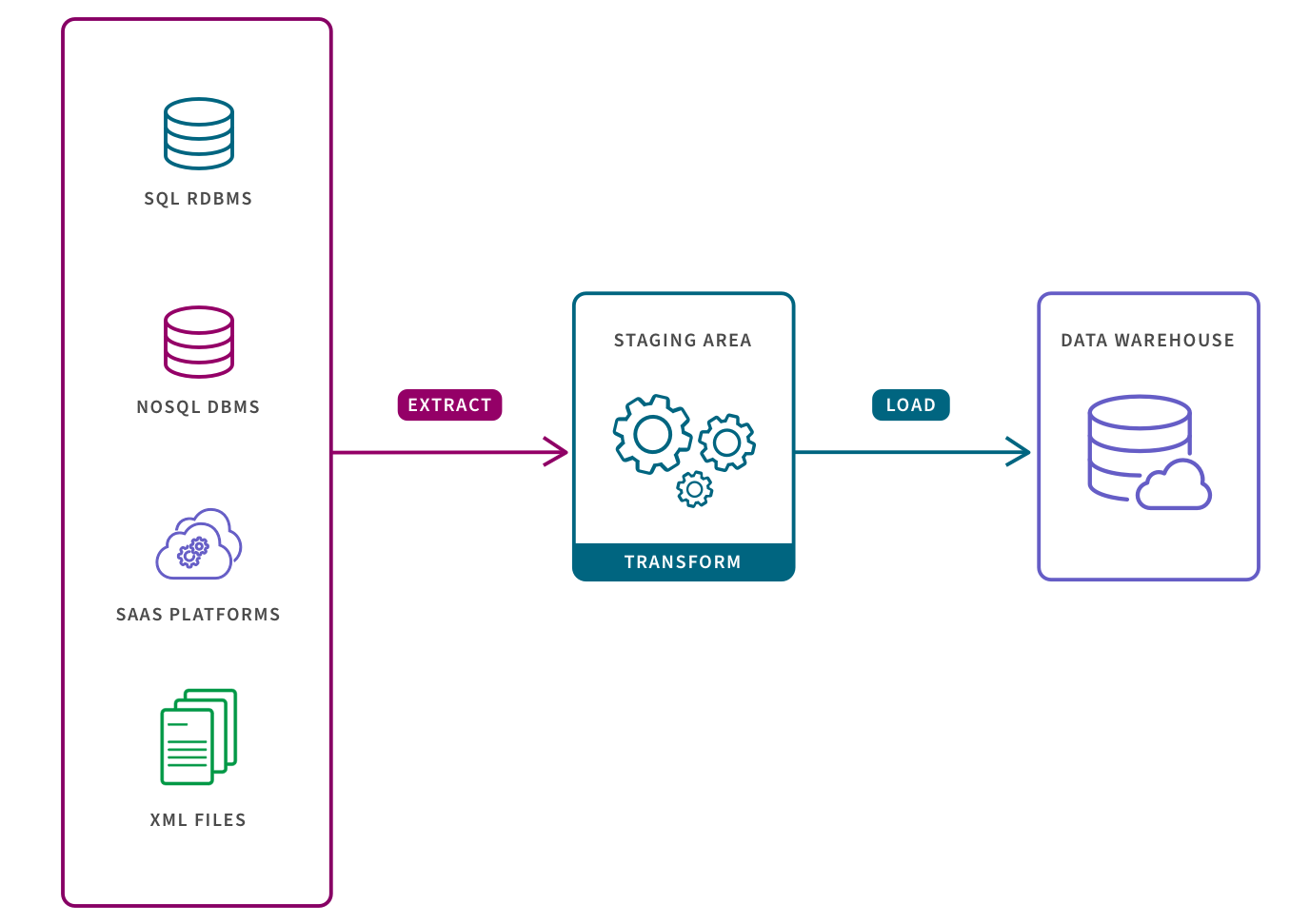

19. ETL Pipeline

Objective

Build an end-to-end ETL (Extract, Transform, Load) pipeline on AWS. The pipeline should extract data from various sources, perform transformations, and load the processed data into a data warehouse or lake. This project is ideal for understanding the core principles of data engineering.

Tech Stack Used

- Data Extraction: AWS Glue or AWS Data Pipeline.

- Data Transformation: Apache Spark on Amazon EMR or AWS Glue.

- Data Storage: Amazon S3 or Amazon Redshift.

- Orchestration: AWS Step Functions or AWS Lambda.
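The three ETL stages can be prototyped locally before any AWS service is involved. This is a minimal sketch that uses an in-memory SQLite database as a stand-in for the warehouse; the CSV content, table layout, and cleaning rules are invented for illustration, and in the AWS version Glue or Spark on EMR would take the place of `transform`, with S3 or Redshift as the target.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with whitespace, a duplicate, and a bad value.
RAW_ORDERS = """order_id,customer,amount
1, Alice ,19.50
2,bob,5.00
2,bob,5.00
3,Carol,not_a_number
"""


def extract(csv_text):
    """Extract: parse the raw CSV into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def transform(rows):
    """Transform: drop bad amounts and duplicate ids, normalize names."""
    seen, clean = set(), []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows whose amount is not numeric
        order_id = int(row["order_id"])
        if order_id in seen:
            continue  # keep only the first occurrence of each order
        seen.add(order_id)
        clean.append((order_id, row["customer"].strip().title(), amount))
    return clean


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")
load(transform(extract(RAW_ORDERS)), warehouse)
print(warehouse.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```

Keeping each stage a separate function mirrors how an orchestrator such as Step Functions would run and retry them independently.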

Click here to explore the source code for this project.

20. ETL and ELT Operations

Objective

Explore ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) data integration approaches on AWS. Compare their strengths and weaknesses in different scenarios. This project will provide insights into when to use each approach based on specific data engineering requirements.

Tech Stack Used

- Data Extraction: AWS Glue or AWS Data Pipeline.

- Data Transformation: AWS Glue for ETL transformations before loading; for ELT, AWS DMS (Database Migration Service) to load the raw data, with transformations then run inside the target system.

- Data Storage: Amazon S3, Amazon Redshift, or Amazon Aurora depending on the approach.

- Orchestration: AWS Step Functions or AWS Lambda.

- Strengths Comparison: ETL offers complex transformations; ELT provides fast data loading.

- Weaknesses Note: ETL requires additional storage; ELT may incur higher target system costs if not managed carefully.
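The difference between the two approaches is mostly about where the transformation runs. The sketch below shows the ELT ordering using an in-memory SQLite database as a stand-in for the target system: raw rows are loaded untouched, then cleaned with SQL inside the database. The table names, sample rows, and cleaning rules are assumptions for illustration; on AWS the target would typically be Redshift or Aurora.

```python
import sqlite3

# Hypothetical raw rows, loaded exactly as received (everything as text).
RAW_ROWS = [
    ("1", " Alice ", "19.50"),
    ("2", "bob", "5.00"),
    ("2", "bob", "5.00"),   # duplicate
    ("3", "Carol", ""),     # missing amount
]


def elt(conn, raw_rows):
    """Load raw data first, then transform it with SQL in the target."""
    # Extract + Load: no cleaning happens before the data lands.
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

    # Transform: the database engine does the typing, trimming, and dedup.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT DISTINCT
            CAST(order_id AS INTEGER) AS order_id,
            TRIM(customer)            AS customer,
            CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE amount <> ''
        """
    )
    conn.commit()
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()


target = sqlite3.connect(":memory:")
print(elt(target, RAW_ROWS))
```

Because the raw table is kept, the transformation can be revised and re-run later without re-extracting from the source, which is one practical argument for ELT; the trade-off is that the compute cost moves to the target system.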

Click here to explore the source code for this project.

Recap of Data Engineering Projects

The mentioned projects offer excellent opportunities to enhance your resume. These diverse experiences encompass designing recommendation engines, developing ETL pipelines, and analyzing real-time data with technologies like Apache Flink and Spark Streaming. Additionally, the projects involve comprehensive analysis of Yelp data, including identifying popular businesses and providing insights to improve services. These accomplishments showcase a strong skill set in data engineering, analytics, and software development, making them valuable additions to your resume.

Conclusion

This curated list features 20 data engineering projects that offer hands-on experience across skill levels. From IoT infrastructure and aviation data analysis to retail analytics and real-time financial market data processing, the projects draw on technologies such as Apache Kafka, Apache Spark, Apache Flink, and PostgreSQL, along with managed services on Azure, AWS, and GCP. With their emphasis on transforming raw data, building ETL pipelines, and supporting business intelligence, they give software engineers practical ways to build proficiency in the tools and programming languages of data engineering.


Frequently Asked Questions

Q. What is data engineering, with an example?

A. Data engineering involves designing, constructing, and maintaining data pipelines, including essential aspects like data modeling. For instance, a pipeline that collects, cleans, and stores customer data for analysis relies on data modeling to structure and organize the information so that it yields meaningful insights.

Q. What are the best practices in data engineering?

A. Best practices in data engineering include robust data quality checks, efficient ETL processes, documentation, and scalability for future data growth.

Q. What do data engineers work on?

A. Data engineers work on tasks like data pipeline development, ensuring data accuracy, collaborating with data scientists, and troubleshooting data-related issues.

Q. How do you showcase data engineering projects on a resume?

A. To showcase data engineering projects on a resume, highlight key projects, mention technologies used, and quantify the impact on data processing or analytics outcomes.