Introduction

LlamaParse is a document parsing library developed by Llama Index to efficiently and effectively parse documents such as PDFs, PPTs, etc.

Creating RAG applications on top of PDF documents presents a significant challenge many of us face, specifically with the complex task of parsing embedded objects such as tables, figures, etc. The nature of these objects often means that conventional parsing techniques struggle to interpret and extract the information encoded within them accurately.

The software development community has introduced various libraries and frameworks in response to this widespread issue. Examples of these solutions include LLMSherpa and unstructured.io. These tools provide robust and flexible solutions to some of the most persistent issues when parsing complex PDFs.

The latest addition to this list of invaluable tools is LlamaParse. LlamaParse was developed by Llama Index, one of the most well-regarded LLM frameworks currently available. Because of this, LlamaParse can be directly integrated with the Llama Index. This seamless integration represents a significant advantage, as it simplifies the implementation process and ensures a higher level of compatibility between the two tools. In conclusion, LlamaParse is a promising new tool that makes parsing complex PDFs less daunting and more efficient.

Learning Objectives

- Recognize Document Parsing Challenges: Understand the difficulties in parsing complex PDFs with embedded objects.

- Introduction to LlamaParse: Learn what LlamaParse is and its seamless integration with Llama Index.

- Setup and Initialization: Create a LlamaCloud account, obtain an API key, and install the necessary libraries.

- Implementing LlamaParse: Follow the steps to initialize the LLM, load, and parse documents.

- Creating a Vector Index and Querying Data: Learn to create a vector store index, set up a query engine, and extract specific information from parsed documents.

This article was published as a part of the Data Science Blogathon.

Table of contents

Steps to create a RAG application on top of PDF using LlamaParse

Step 1: Get the API key

LlamaParse is a part of LlamaCloud platform, hence you need to have a LlamaCloud account to get an api key.

First, you must create an account on LlamaCloud and log in to create an API key.

Step 2: Install the required libraries

Now open your Jupyter Notebook/Colab and install the required libraries. Here, we only need to install two libraries: llama-index and llama-parse. We will be using OpenAI’s model for querying and embedding.

!pip install llama-index

!pip install llama-parseStep 3: Set the environment variables

import os

os.environ['OPENAI_API_KEY'] = 'sk-proj-****'

os.environ["LLAMA_CLOUD_API_KEY"] = 'llx-****'Step 4: Initialize the LLM and embedding model

Here, I am using gpt-3.5-turbo-0125 as the LLM and OpenAI’s text-embedding-3-small as the embedding model. We will use the Settings module to replace the default LLM and the embedding model.

from llama_index.llms.openai import OpenAI

from llama_index.embeddings.openai import OpenAIEmbedding

from llama_index.core import Settings

embed_model = OpenAIEmbedding(model="text-embedding-3-small")

llm = OpenAI(model="gpt-3.5-turbo-0125")

Settings.llm = llm

Settings.embed_model = embed_modelStep 5: Parse the Document

Now, we will load our document and convert it to the markdown type. It is then parsed using MarkdownElementNodeParser.

The table I used is taken from ncrb.gov.in and can be found here: https://ncrb.gov.in/accidental-deaths-suicides-in-india-adsi. It has data embedded at different levels.

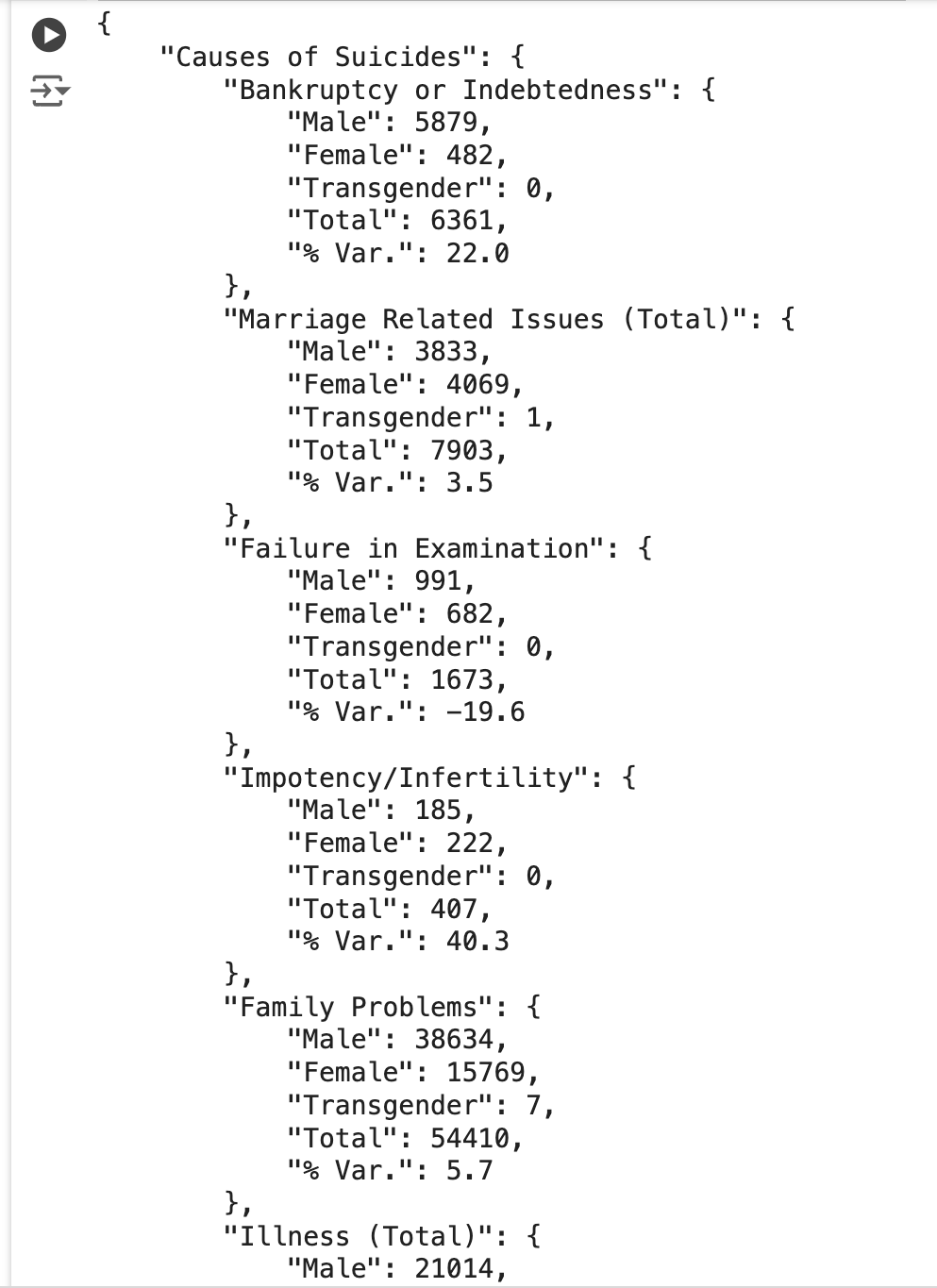

Below is the snapshot of the table that i am trying to parse.

from llama_parse import LlamaParse

from llama_index.core.node_parser import MarkdownElementNodeParser

documents = LlamaParse(result_type="markdown").load_data("./Table_2021.pdf")

node_parser = MarkdownElementNodeParser(

llm=llm, num_workers=8

)

nodes = node_parser.get_nodes_from_documents(documents)

base_nodes, objects = node_parser.get_nodes_and_objects(nodes)Step 6: Create the vector index and query engine

Now, we will create a vector store index using the llama index’s built-in implementation to create a query engine on top of it. We can also use vector stores such as chromadb, pinecone for this.

from llama_index.core import VectorStoreIndex

recursive_index = VectorStoreIndex(nodes=base_nodes + objects)

recursive_query_engine = recursive_index.as_query_engine(

similarity_top_k=5

)Step 7: Querying the Index

query = 'Extract the table as a dict and exclude any information about 2020. Also include % var'

response = recursive_query_engine.query(query)

print(response)The above user query will query the underlying vector index and return the embedded contents in the PDF document in JSON format, as shown in the image below.

As you can see in the screenshot, the table was extracted in a clean JSON format.

Step 8: Putting it all together

from llama_index.llms.openai import OpenAI

from llama_index.embeddings.openai import OpenAIEmbedding

from llama_index.core import Settings

from llama_parse import LlamaParse

from llama_index.core.node_parser import MarkdownElementNodeParser

from llama_index.core import VectorStoreIndex

embed_model = OpenAIEmbedding(model="text-embedding-3-small")

llm = OpenAI(model="gpt-3.5-turbo-0125")

Settings.llm = llm

Settings.embed_model = embed_model

documents = LlamaParse(result_type="markdown").load_data("./Table_2021.pdf")

node_parser = MarkdownElementNodeParser(

llm=llm, num_workers=8

)

nodes = node_parser.get_nodes_from_documents(documents)

base_nodes, objects = node_parser.get_nodes_and_objects(nodes)

recursive_index = VectorStoreIndex(nodes=base_nodes + objects)

recursive_query_engine = recursive_index.as_query_engine(

similarity_top_k=5

)

query = 'Extract the table as a dict and exclude any information about 2020. Also include % var'

response = recursive_query_engine.query(query)

print(response)Conclusion

LlamaParse is an efficient tool for extracting complex objects from various document types, such as PDF files with few lines of code. However, it is important to note that a certain level of expertise in working with LLM frameworks, such as the llama index, is required to utilize this tool fully.

LlamaParse proves valuable in handling tasks of varying complexity. However, like any other tool in the tech field, it is not entirely immune to errors. Therefore, performing a thorough application evaluation is highly recommended independently or leveraging available evaluation tools. Evaluation libraries, such as Ragas, Truera, etc., provide metrics to assess the accuracy and reliability of your results. This step ensures potential issues are identified and resolved before the application is pushed to a production environment.

Key Takeaways

- LlamaParse is a tool created by the Llama Index team. It extracts complex embedded objects from documents like PDFs with just a few lines of code.

- LlamaParse offers both free and paid plans. The free plan allows you to parse up to 1000 pages per day.

- LlamaParse currently supports 10+ file types (.pdf, .pptx, .docx, .html, .xml, and more).

- LlamaParse is part of the LlamaCloud platform, so you need a LlamaCloud account to get an API key.

- With LlamaParse, you can provide instructions in natural language to format the output. It even supports image extraction.

The media shown in this article are not owned by Analytics Vidhya and is used at the Author’s discretion.

Frequently asked questions(FAQ)

A. LlamaIndex is the leading LLM framework, along with LangChain, for building LLM applications. It helps connect custom data sources to large language models (LLMs) and is a widely used tool for building RAG applications.

A. LlamaParse is an offering from Llama Index that can extract complex tables and figures from documents like PDF, PPT, etc. Because of this, LlamaParse can be directly integrated with the Llama Index, allowing us to use it along with the wide variety of agents and tools that the Llama Index offers.

A. Llama Index is an LLM framework for building custom LLM applications and provides various tools and agents. LlamaParse is specially focused on extracting complex embedded objects from documents like PDF, PPT, etc.

A. The importance of LlamaParse lies in its ability to convert complex unstructured data into tables, images, etc., into a structured format, which is crucial in the modern world where most valuable information is available in unstructured form. This transformation is essential for analytics purposes. For instance, studying a company’s financials from its SEC filings, which can span around 100-200 pages, would be challenging without such a tool. LlamaParse provides an efficient way to handle and structure this vast amount of unstructured data, making it more accessible and useful for analysis.

A. Yes, LLMSherpa and unstructured.io are the alternatives available to LlamaParse.

Does it supports on Premise parsing ,if we cant send data to cloud ?