Have you ever wondered how to build a multilingual application that can effortlessly translate text from English to other languages? Imagine creating your very own translation tool, leveraging the power of LangChain to handle the heavy lifting. In this article, we will learn how to build a basic application using LangChain to translate text from English to another language. Even though it’s a simple example, it provides a foundational understanding of some key LangChain concepts and workflows. Let’s build an LLM Application with LCEL.

Overview

By the end of this article, we will have a better understanding of the following points:

- Using Language Models: The app centres on calling a large language model (LLM) to handle translation by sending prompts and receiving responses.

- Prompt Templates & OutputParsers: Prompt templates create flexible prompts for dynamic input, while output parsers ensure the LLM’s responses are formatted correctly.

- LangChain Expression Language (LCEL): LCEL chains together steps like creating prompts, sending them to the LLM, and processing outputs, enabling more complex workflows.

- Debugging with LangSmith: LangSmith helps monitor performance, trace data flow, and debug components as your app scales.

- Deploying with LangServe: LangServe exposes your chain as a REST API, making it accessible to other users and applications.

Table of contents

- Step-by-Step Guide for English to Japanese Translation App using LangChain and LangServe

- 1. Install Required Libraries

- 2. Setting up OpenAI GPT-4 Model for Translation

- 3. Using the Model for English to Japanese Translation

- 4. Use Output Parsers

- 5. Chaining Components Together

- 6. Using Prompt Templates for Translation

- 7. Chaining with LCEL (LangChain Expression Language)

- 8. Debugging with LangSmith

- 9. Deploying with LangServe

- 10. Running the Server

- 11. Interacting Programmatically with the API

- Frequently Asked Questions

Step-by-Step Guide for English to Japanese Translation App using LangChain and LangServe

Here are the steps to build an LLM Application with LCEL:

1. Install Required Libraries

Install the necessary libraries for LangChain and FastAPI:

!pip install langchain

!pip install -qU langchain-openai

!pip install fastapi

!pip install uvicorn

!pip install langserve[all]

2. Setting up OpenAI GPT-4 Model for Translation

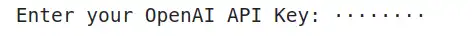

In your Jupyter Notebook, import the necessary modules and input your OpenAI API key:

import getpass

import os

os.environ["OPENAI_API_KEY"] = getpass.getpass('Enter your OpenAI API Key:')
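If the key is already exported in your shell, there is no need to prompt for it every time the notebook restarts. Here is a minimal sketch, assuming a hypothetical helper named ensure_env_var (it is not part of LangChain), that only prompts when the variable is missing:

```python
import getpass
import os


def ensure_env_var(name, prompt=None, ask=getpass.getpass):
    """Return environment variable `name`, prompting only if it is unset.

    `ensure_env_var` is a hypothetical helper for this tutorial, not a LangChain API.
    """
    value = os.environ.get(name)
    if not value:
        # Ask interactively; getpass does not echo the secret to the screen.
        value = ask(prompt or f"Enter {name}: ")
        os.environ[name] = value
    return value


# Usage in the notebook (uncomment to run interactively):
# ensure_env_var("OPENAI_API_KEY", "Enter your OpenAI API Key: ")
```

The ask parameter exists only so the prompt can be swapped out, for example in an automated test.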

Next, instantiate the GPT-4 model for the translation task:

from langchain_openai import ChatOpenAI

model = ChatOpenAI(model="gpt-4")

3. Using the Model for English to Japanese Translation

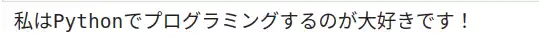

We will now define a system message to specify the translation task (English to Japanese) and a human message to input the text to be translated.

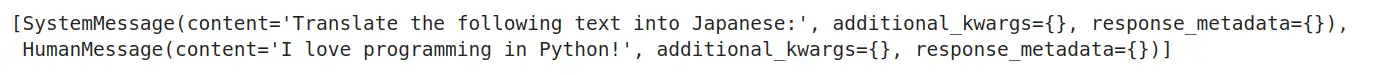

from langchain_core.messages import HumanMessage, SystemMessage

messages = [

SystemMessage(content="Translate the following from English into Japanese"),

HumanMessage(content="I love programming in Python!"),

]

# Invoke the model with the messages

response = model.invoke(messages)

response.content

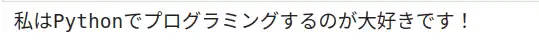

4. Use Output Parsers

The model returns a message object rather than a plain string: alongside the translated text in its content attribute, it carries metadata about the response. If we want to extract just the text of the translation, we can use an output parser:

from langchain_core.output_parsers import StrOutputParser

parser = StrOutputParser()

parsed_result = parser.invoke(response)

parsed_result

5. Chaining Components Together

Now let’s chain the model and the output parser together using the | operator. The output of the model is passed directly to the parser, so the final result (translated_text) is the plain translated string and we don’t have to handle the intermediate message manually.

- The | operator combines the model and the parser into a single chain.

- The invoke() method is called on the chain rather than on the model.

- The messages variable is passed as input to the chain; the model’s response is then handed to the parser automatically.

chain = model | parser

translated_text = chain.invoke(messages)

translated_text
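To see why model | parser works, it can help to look at a stripped-down imitation of the pattern. The sketch below is a toy, not LangChain's actual implementation: Step, fake_model, and content_parser are hypothetical names, and the fake model returns a canned reply instead of calling an LLM. It shows how overloading | can turn two steps into one object with a single invoke().

```python
class Step:
    """A toy pipeline step: wraps a function and supports `|` composition."""

    def __init__(self, func):
        self.func = func

    def invoke(self, value):
        return self.func(value)

    def __or__(self, other):
        # `a | b` builds a new Step that runs a first, then feeds its result to b.
        return Step(lambda value: other.invoke(self.invoke(value)))


# Stand-in for the chat model: returns text plus metadata, like a chat message would.
fake_model = Step(lambda messages: {
    "content": "(canned translation)",
    "metadata": {"input_messages": len(messages)},
})

# Stand-in for the output parser: keeps only the text.
content_parser = Step(lambda message: message["content"])

toy_chain = fake_model | content_parser
print(toy_chain.invoke(["system prompt", "user text"]))  # prints "(canned translation)"
```

Because each | returns another Step, longer chains can be built by repeating the operator.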

6. Using Prompt Templates for Translation

To make the translation dynamic, we can create a prompt template. This way, we can input any English text for translation into Japanese.

from langchain_core.prompts import ChatPromptTemplate

system_template = "Translate the following text into Japanese:"

prompt_template = ChatPromptTemplate.from_messages([

('system', system_template),

('user', '{text}')

])

# Generate a structured message

result = prompt_template.invoke({"text": "I love programming in Python!"})

result.to_messages()

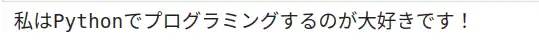

7. Chaining with LCEL (LangChain Expression Language)

We can now chain the prompt template, the language model, and the output parser to make the translation seamless:

chain = prompt_template | model | parser

final_translation = chain.invoke({"text": "I love programming in Python!"})

final_translation

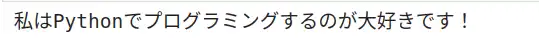

8. Debugging with LangSmith

To enable debugging and tracing with LangSmith, make sure your environment variables are set correctly:

os.environ["LANGCHAIN_TRACING_V2"] = "true"

os.environ["LANGCHAIN_API_KEY"] = getpass.getpass('Enter your LangSmith API Key: ')

LangSmith will help trace the workflow as your chain becomes more complex, showing each step in the process.

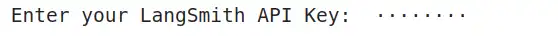
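Traces are easier to find when they are grouped under a named project. The sketch below assumes the LANGCHAIN_PROJECT environment variable, which LangSmith reads to choose the project; the helper name enable_tracing and the project name are made up for illustration.

```python
import os


def enable_tracing(project, api_key=None):
    """Set the environment variables that turn on LangSmith tracing.

    `enable_tracing` is a hypothetical convenience wrapper, not a LangSmith API.
    """
    os.environ["LANGCHAIN_TRACING_V2"] = "true"
    os.environ["LANGCHAIN_PROJECT"] = project  # traces are grouped under this name
    if api_key is not None:
        os.environ["LANGCHAIN_API_KEY"] = api_key


# Call this before building or invoking the chain.
enable_tracing("translation-app-demo")
```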

9. Deploying with LangServe

To deploy your English-to-Japanese translation app as a REST API using LangServe, create a new Python file (e.g., serve.py or Untitled7.py):

from fastapi import FastAPI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

from langchain_openai import ChatOpenAI

from langserve import add_routes

import os

# Set the OpenAI API key (replace the placeholder, or export OPENAI_API_KEY in your shell instead of hard-coding it)

os.environ["OPENAI_API_KEY"] = "Put your API key here"

# Create the model instance

model = ChatOpenAI()

# Set up the components

system_template = "Translate the following text into Japanese:"

prompt_template = ChatPromptTemplate.from_messages([

('system', system_template),

('user', '{text}')

])

parser = StrOutputParser()

# Chain components

chain = prompt_template | model | parser

# FastAPI setup

app = FastAPI(title="LangChain English to Japanese Translation API", version="1.0")

add_routes(app, chain, path="/chain")

if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="localhost", port=8000)

10. Running the Server

To run the server, execute the following command in the terminal:

python Untitled7.py

Your translation app will now be running at http://localhost:8000. You can try it interactively in the browser at http://localhost:8000/chain/playground/.
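Besides the playground, LangServe exposes an invoke endpoint under the same path that accepts a JSON body with an input key. Here is a minimal sketch using only the standard library, assuming the server from step 9 is running on localhost:8000; the function names build_invoke_request and translate are illustrative.

```python
import json
import urllib.request


def build_invoke_request(text, base_url="http://localhost:8000/chain"):
    """Build a POST request for the chain's invoke endpoint."""
    payload = json.dumps({"input": {"text": text}}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/invoke",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def translate(text, base_url="http://localhost:8000/chain"):
    """Send the request and return the chain's output (requires a running server)."""
    with urllib.request.urlopen(build_invoke_request(text, base_url)) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["output"]


# With the server running:
# print(translate("I love programming in Python!"))
```

This is handy for clients that cannot install LangServe, since any language with an HTTP library can send the same request.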

11. Interacting Programmatically with the API

You can interact with the API using LangServe’s RemoteRunnable:

from langserve import RemoteRunnable

remote_chain = RemoteRunnable("http://localhost:8000/chain/")

translated_text = remote_chain.invoke({"text": "I love programming in Python!"})

print(translated_text)

Conclusion

In this tutorial, we built an English-to-Japanese translation app using LangChain (LLM Application with LCEL). We created a flexible and scalable translation API by chaining components like prompt templates, language models, and output parsers. You can now modify it to translate into other languages or expand its functionality to include language detection or more complex workflows.

Frequently Asked Questions

Q1. What is LangChain, and how is it used in this app?

Ans. LangChain is a framework that simplifies the process of working with language models (LLMs) by chaining various components such as prompt templates, language models, and output parsers. In this app, LangChain is used to build a translation workflow, from inputting text to translating it into another language.

Q2. What is the difference between the SystemMessage and the HumanMessage?

Ans. The SystemMessage defines the task for the language model (e.g., “Translate the following from English into Japanese”), while the HumanMessage contains the actual text you want to translate.

Q3. What is a Prompt Template, and why use one?

Ans. A Prompt Template allows you to dynamically create a structured prompt for the LLM by defining placeholders (e.g., text to be translated) in the template. This makes the translation process flexible, as you can input different texts and reuse the same structure.

Q4. How does LCEL simplify the workflow?

Ans. LCEL enables you to seamlessly chain components. In this app, components such as the prompt template, the language model, and the output parser are chained using the | operator. This simplifies the workflow by connecting different steps in the translation process.

Q5. What is LangSmith, and how does it help with debugging?

Ans. LangSmith is a tool for debugging and tracing your LangChain workflows. As your app becomes more complex, LangSmith helps track each step and provides insights into performance and data flow, aiding in troubleshooting and optimization.