Six months ago, LLMs.txt was introduced as a groundbreaking file format designed to make website documentation accessible for large language models (LLMs). Since its release, the standard has steadily gained traction among developers and content creators. Today, as discussions around the Model Context Protocol (MCP) intensify, LLMs.txt is in the spotlight as a proven, AI-first documentation solution that bridges the gap between human-readable content and machine-friendly data. In this article, we’ll explore the evolution of LLMs.txt, examine its structure and benefits, dive into technical integrations (including a Python module and CLI), and compare it to the emerging MCP standard.

Table of contents

- The Rise of LLMs.txt

- Community Buzz on Twitter

- What is an LLMs.txt File?

- Key Advantages of LLMs.txt

- How to Use LLMs.txt with AI Systems?

- Tools for Generating LLMs.txt Files

- Real-World Implementations & Versatility

- Python Module & CLI for LLMs.txt

- Python Source Code Overview

- Comparing LLMs.txt and MCP (Model Context Protocol)

- Conclusion

The Rise of LLMs.txt

Background and Context

Released six months ago, LLMs.txt was developed to address a critical challenge: traditional web files like robots.txt and sitemap.xml are designed for search engine crawlers—not for AI models that require concise, curated content. LLMs.txt provides a streamlined overview of website documentation, allowing LLMs to quickly understand essential information without being bogged down by extraneous details.

Key Points:

- Purpose: To distill website content into a format optimized for AI reasoning.

- Adoption: In its short lifespan, major platforms such as Mintlify, Anthropic, and Cursor have integrated LLMs.txt, highlighting its effectiveness.

- Current Trends: With the recent surge in discussions around the Model Context Protocol (MCP), the community is actively comparing these two approaches for enhancing LLM capabilities.

Community Buzz on Twitter

The conversation on Twitter reflects the rapid adoption of LLMs.txt and the excitement around its potential, even as the debate about MCPs grows:

- Jeremy Howard (@jeremyphoward):

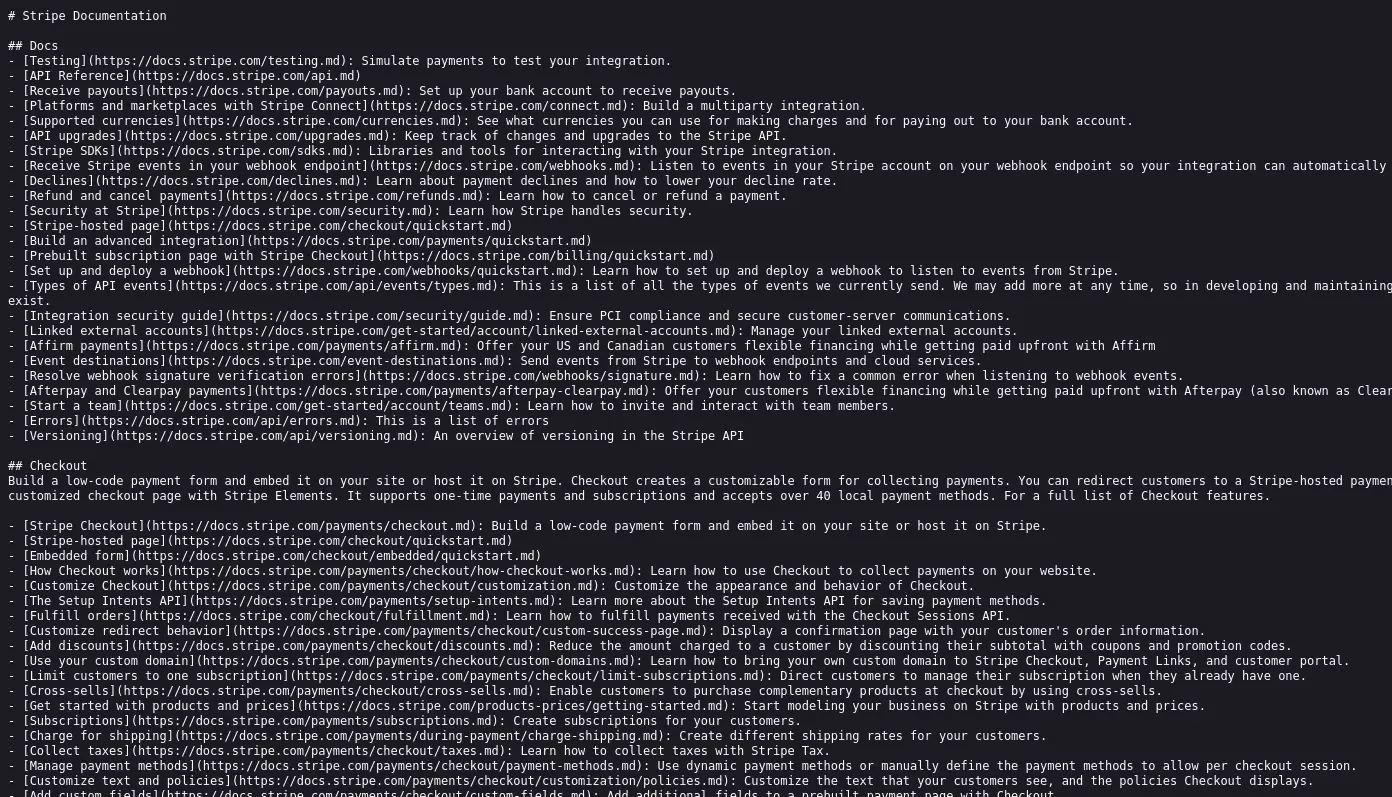

Celebrated the momentum, stating, “My proposed llms.txt standard really has got a huge amount of momentum in recent weeks.” He thanked the community—and notably @StripeDev—for their support, with Stripe now hosting LLMs.txt on their documentation site. - Stripe Developers (@StripeDev):

Announced that they’ve added LLMs.txt and Markdown to their docs (docs.stripe.com/llms.txt), enabling developers to easily integrate Stripe’s knowledge into any LLM.

- Developer Insights:

Tweets from developers like @TheSeaMouse, @xpression_app, and @gpjt have not only praised LLMs.txt but have also sparked discussions comparing it to MCP. Some users note that while LLMs.txt enhances content ingestion, MCP promises to make LLMs more actionable.

What is an LLMs.txt File?

LLMs.txt is a Markdown file with a structured format specifically designed for making website documentation accessible to LLMs. There are two primary versions:

/llms.txt

- Purpose: Provides a high-level, curated overview of your site’s documentation. It helps LLMs quickly grasp the site’s structure and locate key resources.

- Structure:

  - An H1 with the name of the project or site. This is the only required section.

  - A blockquote with a short summary of the project, containing key information necessary for understanding the rest of the file.

  - Zero or more Markdown sections (e.g., paragraphs, lists) of any type except headings, containing more detailed information about the project and how to interpret the provided files.

  - Zero or more Markdown sections delimited by H2 headers, containing “file lists” of URLs where further detail is available.

  - Each “file list” is a Markdown list, containing a required Markdown hyperlink [name](url), then optionally a colon (:) and notes about the file.
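The structure described above can be sketched as a small data model plus a renderer. This is a minimal illustration, not part of any official tooling: the class names `Link` and `LlmsTxt`, the `render` function, and the example.com URLs are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    """One file-list entry: a required name and URL, plus optional notes."""
    name: str
    url: str
    notes: str = ""


@dataclass
class LlmsTxt:
    """In-memory model of an llms.txt file (illustrative names, not an official API)."""
    title: str                                                      # H1, the only required part
    summary: str = ""                                               # rendered as a blockquote
    info: list[str] = field(default_factory=list)                   # free-form paragraphs
    sections: dict[str, list[Link]] = field(default_factory=dict)   # H2 heading -> file list


def render(doc: LlmsTxt) -> str:
    """Render the model as llms.txt Markdown."""
    parts = [f"# {doc.title}"]
    if doc.summary:
        parts.append(f"> {doc.summary}")
    parts.extend(doc.info)
    for heading, links in doc.sections.items():
        items = [
            f"- [{link.name}]({link.url})" + (f": {link.notes}" if link.notes else "")
            for link in links
        ]
        parts.append(f"## {heading}\n\n" + "\n".join(items))
    return "\n\n".join(parts) + "\n"


# Hypothetical project used only for illustration.
doc = LlmsTxt(
    title="Example Project",
    summary="A hypothetical library used only for illustration.",
    info=["All links point to Markdown versions of the docs."],
    sections={
        "Docs": [Link("Quick Start", "https://example.com/quickstart.md", "A concise introduction")],
        "Optional": [Link("Changelog", "https://example.com/changelog.md")],
    },
)
print(render(doc))
```

Keeping the model separate from the renderer makes it easy to check that the only required field, the title, is always present before anything is written to disk.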

/llms-full.txt

- Purpose: Contains the complete documentation content in one place, offering detailed context when needed.

- Usage: Especially useful for technical API references, in-depth guides, and comprehensive documentation scenarios.

Example Structure Snippet:

    # Project Name

    > Brief project summary

    ## Core Documentation

    - [Quick Start](url): A concise introduction
    - [API Reference](url): Detailed API documentation

    ## Optional

    - [Additional Resources](url): Supplementary information

Key Advantages of LLMs.txt

LLMs.txt offers several distinct benefits over traditional web standards:

- Optimized for LLM Processing: It eliminates non-essential elements like navigation menus, JavaScript, and CSS, focusing solely on the critical content that LLMs need.

- Efficient Context Management: Given that LLMs operate within limited context windows, the concise format of LLMs.txt ensures that only the most pertinent information is used.

- Dual Readability: The Markdown format makes LLMs.txt both human-friendly and easily parsed by automated tools.

- Complementary to Existing Standards: Unlike sitemap.xml or robots.txt, which serve different purposes, LLMs.txt provides a curated, AI-centric view of documentation.
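To get a rough feel for the first two points, the sketch below strips scripts, styles, and navigation from a small HTML page and compares sizes. It is only an illustration under stated assumptions: the `TextOnly` class, the list of skipped tags, and the sample page are made up, and character counts are a crude stand-in for real token counts.

```python
from html.parser import HTMLParser


class TextOnly(HTMLParser):
    """Collect visible text, skipping elements that carry no documentation content."""

    SKIP = {"script", "style", "nav", "header", "footer"}  # assumed list of noise tags

    def __init__(self):
        super().__init__()
        self.depth = 0    # greater than 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the text a reader would see, one chunk per line."""
    parser = TextOnly()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


# A made-up page with typical non-content elements.
page = """<html><head><style>body{margin:0}</style>
<script>console.log('analytics')</script></head>
<body><nav><a href="/">Home</a><a href="/docs">Docs</a></nav>
<h1>Quick Start</h1><p>Install the package and call run().</p>
</body></html>"""

text = visible_text(page)
print(text)
print(f"{len(page)} characters of HTML -> {len(text)} characters of text")
```

On real pages the gap is usually much larger, since navigation, scripts, and styling often outweigh the documentation text itself.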

How to Use LLMs.txt with AI Systems?

To leverage the benefits of LLMs.txt, its content must be fed into AI systems manually. Here’s how different platforms integrate LLMs.txt:

ChatGPT

- Method: Users copy the URL or full content of the /llms-full.txt file into ChatGPT, enriching the context for more accurate responses.

- Benefits: This method enables ChatGPT to reference detailed documentation when needed.

Claude

- Method: Since Claude currently lacks direct browsing capabilities, users can paste the content or upload the file, ensuring comprehensive context is provided.

- Benefits: This approach grounds Claude’s responses in reliable, up-to-date documentation.

Cursor

- Method: Cursor’s @Docs feature allows users to add an LLMs.txt link, integrating external content seamlessly.

- Benefits: Enhances the contextual awareness of Cursor, making it a robust tool for developers.
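For assistants without a built-in docs feature, the copy-and-paste workflow described above can be scripted. The following is a minimal sketch, assuming a hypothetical `build_prompt` helper, an arbitrary character limit, and a placeholder URL; it does not call any specific vendor API.

```python
from urllib.request import urlopen


def fetch_text(url: str, timeout: float = 10.0) -> str:
    """Download a text file such as /llms-full.txt (requires network access)."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def build_prompt(docs: str, question: str, max_chars: int = 20_000) -> str:
    """Wrap documentation and a question into one prompt, truncating long docs."""
    if len(docs) > max_chars:
        docs = docs[:max_chars] + "\n[documentation truncated]"
    return (
        "Answer using only the documentation below.\n\n"
        f"<documentation>\n{docs}\n</documentation>\n\n"
        f"Question: {question}"
    )


# Offline demonstration with a stand-in for the downloaded file.
sample_docs = "# Example Project\n\n> A hypothetical library.\n\nCall run() to start."
prompt = build_prompt(sample_docs, "How do I start the library?")
print(prompt)

# With network access, the same flow would look like this (placeholder URL):
# prompt = build_prompt(fetch_text("https://example.com/llms-full.txt"), "How do I start?")
```

The resulting string can be pasted into a chat window or sent through whichever API client you already use. The truncation limit is a placeholder and should be tuned to the model's context window.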

Tools for Generating LLMs.txt Files

Several tools streamline the creation of LLMs.txt files, reducing manual effort:

- Mintlify: Automatically generates both /llms.txt and /llms-full.txt files for hosted documentation, ensuring consistency.

Look into this too: https://mintlify.com/docs/quickstart

- llmstxt by dotenv: Converts your site’s sitemap.xml into a compliant LLMs.txt file, integrating seamlessly with your existing workflow.

- llmstxt by Firecrawl: Utilizes web scraping to compile your website content into an LLMs.txt file, minimizing manual intervention.
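To illustrate the sitemap-based approach in general terms, here is a minimal sketch that turns sitemap.xml entries into an LLMs.txt file list. It is not the implementation of any of the tools above: the function name `sitemap_to_llms_txt`, the single "Docs" section, and the way link names are derived from URL slugs are assumptions for the example.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Standard namespace used by sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_to_llms_txt(sitemap_xml: str, title: str, summary: str) -> str:
    """Turn the <loc> entries of a sitemap into a single 'Docs' file list."""
    root = ET.fromstring(sitemap_xml)
    lines = [f"# {title}", "", f"> {summary}", "", "## Docs", ""]
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        if not url:
            continue
        # Derive a readable link name from the last path segment.
        slug = urlparse(url).path.rstrip("/").split("/")[-1] or "home"
        name = slug.replace("-", " ").replace("_", " ").title()
        lines.append(f"- [{name}]({url})")
    return "\n".join(lines) + "\n"


# A made-up sitemap for a hypothetical docs site.
sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/docs/quick-start</loc></url>
  <url><loc>https://example.com/docs/api_reference</loc></url>
</urlset>"""

print(sitemap_to_llms_txt(sitemap, "Example Project", "Hypothetical docs site."))
```

A generated list like this is only a starting point. The value of LLMs.txt comes from curation, so you would normally prune the links and add short notes by hand.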

Real-World Implementations & Versatility

The versatility of LLMs.txt files is evident from real-world projects like FastHTML, which follows both proposals for AI-friendly documentation (an /llms.txt file and Markdown versions of individual pages):

- FastHTML Documentation: The FastHTML project not only uses an LLMs.txt file to provide a curated overview of its documentation but also offers a Markdown version of each regular HTML docs page at the same URL with a .md extension appended. This dual approach ensures that both human readers and LLMs can access the content in the most suitable format.

- Automated Expansion: The FastHTML project opts to automatically expand its LLMs.txt file into two Markdown files that use an XML-based structure:

  - llms-ctx.txt: Contains the context without the optional URLs.

  - llms-ctx-full.txt: Includes the optional URLs for a more comprehensive context.

  These files are generated using the llms_txt2ctx command-line application, and the FastHTML documentation provides detailed guidance on how to use them.

- Versatility Across Applications:

Beyond technical documentation, LLMs.txt files are proving useful in a variety of contexts—from helping developers navigate software documentation to enabling businesses to outline their structures, breaking down complex legislation for stakeholders, and even providing personal website content (e.g., CVs).

Notably, all nbdev projects now generate .md versions of all pages by default. Similarly, Answer.AI and fast.ai projects using nbdev have their docs regenerated with this feature, as exemplified by the Markdown version of fastcore’s docments module.

Python Module & CLI for LLMs.txt

For developers looking to integrate LLMs.txt into their workflows, a dedicated Python module and CLI are available to parse LLMs.txt files and create XML context documents suitable for systems like Claude. This tool not only makes it easy to convert documentation into XML but also provides both a command-line interface and a Python API.

Install

    pip install llms-txt

How to use it?

CLI

After installation, llms_txt2ctx is available in your terminal.

To get help for the CLI:

    llms_txt2ctx -h

To convert an llms.txt file to XML context and save to llms.md:

    llms_txt2ctx llms.txt > llms.md

Pass --optional True to add the ‘optional’ section of the input file.

Python module

    from llms_txt import *

    samp = Path('llms-sample.txt').read_text()

Use parse_llms_file to create a data structure with the sections of an llms.txt file (you can also add optional=True if needed):

    parsed = parse_llms_file(samp)
    list(parsed)

    ['title', 'summary', 'info', 'sections']

    parsed.title,parsed.summary

Implementation and tests

To show how simple it is to parse llms.txt files, here’s a complete parser in <20 lines of code with no dependencies:

    from pathlib import Path
    import re, itertools

    def chunked(it, chunk_sz):
        "Return an iterator of lists of up to `chunk_sz` items from `it`"
        it = iter(it)
        return iter(lambda: list(itertools.islice(it, chunk_sz)), [])

    def parse_llms_txt(txt):
        "Parse llms.txt file contents in `txt` to a `dict`"
        def _p(links):
            # Each list item is `- [title](url)` with an optional `: description`
            link_pat = r'-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^\)]+)\)(?::\s*(?P<desc>.*))?'
            return [re.search(link_pat, l).groupdict()
                    for l in re.split(r'\n+', links.strip()) if l.strip()]

        # Split on H2 headers: `start` is the preamble, `rest` alternates heading, body
        start,*rest = re.split(r'^##\s*(.*?$)', txt, flags=re.MULTILINE)
        sects = {k: _p(v) for k,v in dict(chunked(rest, 2)).items()}
        # H1 title, optional blockquote summary, then free-form info
        pat = r'^#\s*(?P<title>.+?$)\n+(?:^>\s*(?P<summary>.+?$)$)?\n+(?P<info>.*)'
        d = re.search(pat, start.strip(), (re.MULTILINE|re.DOTALL)).groupdict()
        d['sections'] = sects
        return d

We have provided a test suite in tests/test-parse.py and confirmed that this implementation passes all tests.

Python Source Code Overview

The llms_txt Python module provides the source code and helpers needed to create and use llms.txt files. Here’s a brief overview of its functionality:

- File Specification: The module adheres to the llms.txt spec: an H1 title, a blockquote summary, optional content sections, and H2-delimited sections containing file lists.

- Parsing Helpers: Functions such as parse_llms_file break down the file into a simple data structure (with keys like title, summary, info, and sections). For example, parse_link(txt) extracts the title, URL, and optional description from a Markdown link.

- XML Conversion: Functions like create_ctx and mk_ctx convert the parsed data into an XML context file, which is especially useful for systems like Claude.

- Command-Line Interface: The CLI command llms_txt2ctx uses these helpers to process an llms.txt file and output an XML context file. This tool simplifies integrating llms.txt into various workflows.

- Concise Implementation: The module even includes a parser implemented in under 20 lines of code, leveraging regex and helper functions like chunked for efficient processing.

For more details, you can refer to this link – https://llmstxt.org/core.html
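To make the XML conversion step more concrete, here is a minimal sketch of the idea using only the standard library. It is not the module's create_ctx or mk_ctx implementation: the function name `to_xml_context`, the element and attribute names, and the sample data are assumptions, and the sketch does not download the linked pages.

```python
import xml.etree.ElementTree as ET


def to_xml_context(parsed: dict, include_optional: bool = False) -> str:
    """Build an XML context string from a parsed llms.txt structure.

    `parsed` uses the keys described above: title, summary, info, sections.
    Element and attribute names are illustrative assumptions.
    """
    root = ET.Element("project", title=parsed["title"], summary=parsed.get("summary") or "")
    if parsed.get("info"):
        ET.SubElement(root, "info").text = parsed["info"]
    for name, links in parsed["sections"].items():
        if name.lower() == "optional" and not include_optional:
            continue  # leave out the 'Optional' section unless asked for
        section = ET.SubElement(root, "section", name=name)
        for link in links:
            doc = ET.SubElement(section, "doc", title=link["title"], url=link["url"])
            doc.text = link.get("desc") or ""
    ET.indent(root)  # pretty-print; available from Python 3.9
    return ET.tostring(root, encoding="unicode")


# Hand-written stand-in for the output of a parser.
parsed = {
    "title": "Example Project",
    "summary": "A hypothetical library.",
    "info": "Links point to Markdown pages.",
    "sections": {
        "Docs": [{"title": "Quick Start", "url": "https://example.com/qs.md", "desc": "Intro"}],
        "Optional": [{"title": "Changelog", "url": "https://example.com/changes.md", "desc": None}],
    },
}

short_ctx = to_xml_context(parsed)                        # without the optional section
full_ctx = to_xml_context(parsed, include_optional=True)  # with the optional section
print(short_ctx)
```

The `include_optional` switch mirrors the distinction between a compact context and a fuller one that also covers the links under the Optional heading.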

Comparing LLMs.txt and MCP (Model Context Protocol)

While both LLMs.txt and the emerging Model Context Protocol (MCP) aim to enhance LLM capabilities, they tackle different challenges in the AI ecosystem. The comparison below looks at each approach in more detail:

LLMs.txt

- Objective: Focuses on providing LLMs with clean, curated content by distilling website documentation into a structured Markdown format.

- Implementation: A static file maintained by site owners, ideal for technical documentation, API references, and comprehensive guides.

- Benefits:

  - Simplifies content ingestion.

  - Easy to implement and update.

  - Enhances prompt quality by filtering out unnecessary elements.

MCP (Model Context Protocol)

What is MCP?

MCP is an open standard that creates secure, two-way connections between your data and AI-powered tools. Think of it as a USB-C port for AI applications—a single, common connector that lets different tools and data sources “talk” to each other.

Why Does MCP Matter?

As AI assistants become integral to our daily workflow (consider Replit, GitHub Copilot, or Cursor IDE), ensuring they have access to all the context they need is crucial. Today, integrating new data sources often requires custom code, which is messy and time-consuming. MCP simplifies this by:

- Offering Pre-built Integrations: A growing library of ready-to-use connectors.

- Providing Flexibility: Enabling seamless switching between different AI providers.

- Enhancing Security: Ensuring that your data remains secure within your infrastructure.

How Does MCP Work?

MCP follows a client-server architecture:

- MCP Hosts: Programs (like Claude Desktop or popular IDEs) that want to access data.

- MCP Clients: Components maintaining a 1:1 connection with MCP servers.

- MCP Servers: Lightweight adapters that expose specific data sources or tools.

- Connection Lifecycle:

- Initialization: Exchange of protocol versions and capabilities.

- Message Exchange: Supporting request-response patterns and notifications.

- Termination: Clean shutdown, disconnection, or handling errors.
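The lifecycle above can be sketched as a sequence of JSON-RPC 2.0 messages, which is the message format MCP builds on. This is a simplified illustration rather than a working client: the helper functions, the `example-client` name, the `search_docs` tool, and the version string are placeholders, and a real integration would normally use an MCP SDK and an actual transport.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # request ids must be unique within a session


def request(method: str, params: Optional[dict] = None) -> dict:
    """A JSON-RPC 2.0 request: carries an id and expects a response."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def notification(method: str) -> dict:
    """A JSON-RPC 2.0 notification: no id, so no response is expected."""
    return {"jsonrpc": "2.0", "method": method}


lifecycle = [
    # 1. Initialization: the client proposes a protocol version and its capabilities.
    request("initialize", {
        "protocolVersion": "2024-11-05",  # example version string
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},
    }),
    notification("notifications/initialized"),
    # 2. Message exchange: ordinary request-response traffic.
    request("tools/list"),
    request("tools/call", {"name": "search_docs", "arguments": {"query": "llms.txt"}}),
]
# 3. Termination: handled by closing the underlying transport, so no message is modeled here.

for msg in lifecycle:
    print(json.dumps(msg))
```

The difference between requests and notifications is the presence of an `id`: the server answers the former and silently accepts the latter.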

Real-World Impact and Early Adoption

Imagine if your AI tool could seamlessly access your local files, databases, or remote services without writing custom code for every connection. MCP promises exactly that—simplifying how AI tools integrate with diverse data sources. Early adopters are already experimenting with MCP in various environments, streamlining workflows and reducing development overhead.


Shared Motives and Key Differences

Common Goal: Both LLMs.txt and MCP aim to empower LLMs, but they do so in complementary ways. LLMs.txt improves content ingestion by providing a curated, streamlined view of website documentation, while MCP extends LLM functionality by enabling them to execute real-world tasks. In essence, LLMs.txt helps LLMs “read” better, and MCP helps them “act” effectively.

Nature of the Solutions

- LLMs.txt:

  - Static, Curated Content Standard: LLMs.txt is designed as a static file that adheres to a strict Markdown-based structure. It includes an H1 title, a blockquote summary, and H2-delimited sections with curated link lists.

  - Technical Advantages:

    - Token Efficiency: By filtering out non-essential details (such as navigation menus, JavaScript, and CSS), LLMs.txt compresses complex web content into a succinct format that fits within the limited context windows of LLMs.

    - Simplicity: Its format is easy to generate and parse using standard text processing tools (e.g., regex and Markdown parsers), making it accessible to a wide range of developers.

  - Enhancing Capabilities:

    - Improves the quality of context provided to an LLM, leading to more accurate reasoning and better response generation.

    - Facilitates testing and iterative refinement since its structure is predictable and machine-readable.

- MCP (Model Context Protocol):

  - Dynamic, Action-Enabling Protocol: MCP is a robust, open standard that creates secure, two-way communications between LLMs and external data sources or services.

  - Technical Advantages:

    - Standardized API Interfacing: MCP acts like a universal connector, similar to a USB-C port, allowing LLMs to interface seamlessly with diverse data sources (local files, databases, remote APIs, etc.) without custom code for each integration.

    - Real-Time Interaction: Through a client-server architecture, MCP supports dynamic request-response cycles and notifications, enabling LLMs to fetch real-time data and execute tasks (e.g., sending emails, updating spreadsheets, or triggering workflows).

    - Complexity Handling: MCP must address challenges like authentication, error handling, and asynchronous communication, making it more engineering-intensive but also far more versatile in extending LLM capabilities.

  - Enhancing Capabilities:

    - Transforms LLMs from passive text generators into active, task-performing assistants.

    - Facilitates seamless integration of LLMs into business processes and development workflows, boosting productivity through automation.

Ease of Implementation

- LLMs.txt:

  - Is relatively straightforward to implement. Its creation and parsing rely on lightweight text processing techniques that require minimal engineering overhead.

  - Can be maintained manually or via simple automation tools.

- MCP:

  - Requires a robust engineering effort. Implementing MCP involves designing secure APIs, managing a client-server architecture, and continuously maintaining compatibility with evolving external service standards.

  - Involves developing pre-built connectors and handling the complexities of real-time data exchange.

Together: These innovations represent complementary strategies in enhancing LLM capabilities. LLMs.txt ensures that LLMs have a high-quality, condensed snapshot of essential content—greatly improving comprehension and response quality. Meanwhile, MCP elevates LLMs by allowing them to bridge the gap between static content and dynamic action, ultimately transforming them from mere content analyzers into interactive, task-performing systems.

Conclusion

LLMs.txt, released six months ago, has already carved out a vital niche in the realm of AI-first documentation. By providing a curated, streamlined method for LLMs to ingest and understand complex website documentation, LLMs.txt significantly enhances the accuracy and reliability of AI responses. Its simplicity and token efficiency have made it an invaluable tool for developers and content creators alike.

At the same time, the emergence of the Model Context Protocol (MCP) marks the next evolution in LLM capabilities. MCP’s dynamic, standardized approach allows LLMs to seamlessly access and interact with external data sources and services, transforming them from passive readers into active, task-performing assistants. Together, LLMs.txt and MCP represent a powerful synergy: while LLMs.txt ensures that AI models have the best possible context, MCP provides them with the means to act upon that context.

Looking ahead, the future of AI-driven documentation and automation appears increasingly promising. As best practices and tools continue to evolve, developers can expect more integrated, secure, and efficient systems that not only enhance the capabilities of LLMs but also redefine how we interact with digital content. Whether you’re a developer striving for innovation, a business owner aiming to optimize workflows, or an AI enthusiast eager to explore the frontier of technology, now is the time to dive into these standards and unlock the full potential of AI-first documentation.